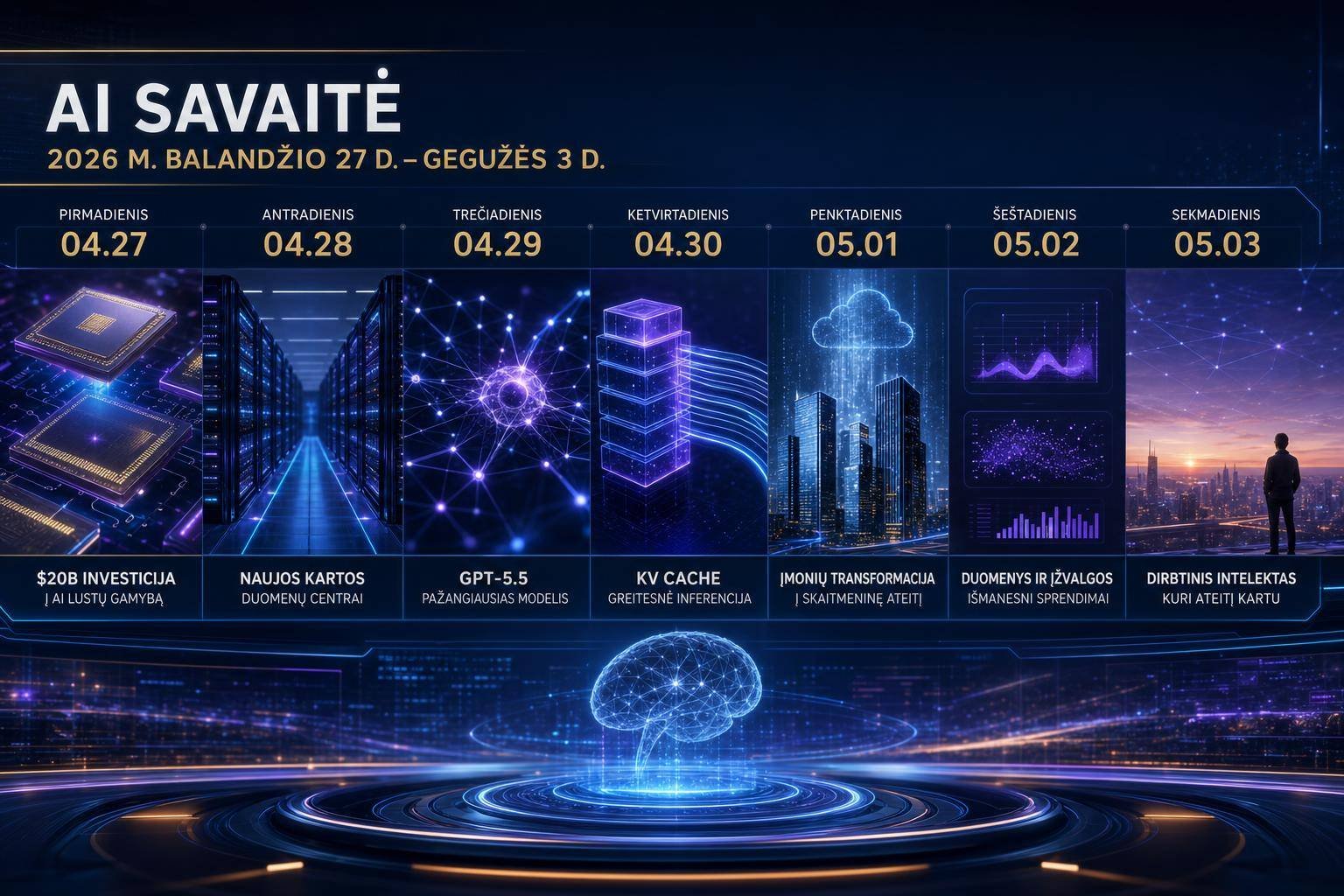

AI Weekly Roundup (Apr 27–May 3, 2026): Amazon’s $20B AI Chips, OpenAI GPT‑5.5, KV Cache Breakthroughs, and Enterprise Shifts

Hardware revenue is climbing, models are specializing, and the enterprise stack is getting reshaped. This week’s AI developments weren’t just incremental—they signaled how compute economics, model architectures, and platform control points are converging into the next phase of AI maturity.

In this AI weekly roundup, we break down the top moves—Amazon’s $20B custom silicon run rate, OpenAI’s GPT‑5.5 and Codex 2.0, NIST’s evaluation of DeepSeek V4 Pro, Meta’s humanoid robotics push, a KV cache compression leap, Microsoft–OpenAI’s contract change, and a Mayo Clinic breakthrough in early cancer detection—plus what they mean for your roadmap in the next 6–18 months.

1) Hardware: Custom Silicon Surges and KV Cache Tricks Speed Inference

Amazon’s Q1 2026 earnings disclosed that its custom chips, the Trainium3 AI accelerator and the Graviton4 general-purpose CPU, have together surpassed a $20B annual revenue run rate. That’s more than a bragging right: it confirms that demand for specialized AI silicon is exploding as enterprises seek better total cost of ownership (TCO), tighter workload fit, and predictable supply.

Meanwhile, a Google research effort dubbed “TurboQuant” reportedly achieved a 6x compression of the LLM key-value (KV) cache with 3.5‑bit precision, delivering up to 8x faster inference in some scenarios. KV cache compression may sound niche, but it’s one of the most pragmatic levers to cut memory pressure and latency in production LLM serving.

Why custom silicon revenue matters now

- Workload specificity is the new default. General-purpose GPUs remain vital, but for steady-state training and inference at scale, the winners are increasingly co-designed stacks: chips tuned to the model and runtime.

- Vendor power is rebalancing. A $20B run rate for in-house chips signals to the market that hyperscalers will hedge their GPU dependence with vertically integrated alternatives. Expect more price transparency and multi-sourcing in enterprise RFPs.

- Capacity planning changes. If you can book capacity on Trainium3/Graviton4 with sustained pricing and performance guarantees, you can lock in inference economics and mitigate future GPU shocks.

- Tooling catch-up. The real unlock is software. Compiler and kernel maturity (e.g., the Triton kernel language, XLA, and vendor-tuned libraries such as the AWS Neuron SDK) determines whether teams capture the promised perf/$ from custom silicon.

For teams benchmarking new accelerators, line up representative workloads: long-context inference, multi-turn agents with tool use, RAG-heavy retrieval, and fine-tuning. Model-hosting services that expose chip choice and provide transparent per-token latency dashboards will show you when silicon differences actually matter.
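The per-token latency view described above can be approximated from your own request logs. A minimal sketch, assuming each request is logged with time to first token (TTFT), total response time, and output token count; the `RequestTiming` record and its field names are hypothetical:

```python
import math
from dataclasses import dataclass


@dataclass
class RequestTiming:
    ttft_s: float       # time to first token, in seconds (hypothetical log field)
    total_s: float      # wall-clock time for the full response, in seconds
    output_tokens: int  # number of generated tokens


def percentile(values, pct):
    """Nearest-rank percentile, pct in (0, 100]. Expects a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(timings):
    """Summarize a run; expects at least one request with two or more output tokens."""
    ttfts = [t.ttft_s for t in timings]
    # Time per output token after the first one (decode speed).
    tpots = [(t.total_s - t.ttft_s) / (t.output_tokens - 1)
             for t in timings if t.output_tokens > 1]
    return {
        "ttft_p50": percentile(ttfts, 50),
        "ttft_p95": percentile(ttfts, 95),
        "ttft_p99": percentile(ttfts, 99),
        "tpot_p50": percentile(tpots, 50),
        "tpot_p95": percentile(tpots, 95),
        # Mean per-request throughput; aggregate tokens/s under concurrency is a different metric.
        "mean_tokens_per_s": sum(t.output_tokens / t.total_s for t in timings) / len(timings),
    }


runs = [RequestTiming(0.20, 2.2, 101), RequestTiming(0.35, 3.35, 151), RequestTiming(0.90, 4.9, 201)]
print(summarize(runs))
```

Running the same summary per accelerator and per workload mix (long context, agents, RAG, fine-tuned variants) keeps tail-latency differences visible instead of averaging them away.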

If your organization leans into NVIDIA Blackwell, align your software pipeline with its memory and bandwidth profile. NVIDIA’s GB200 Grace Blackwell design focuses on high-bandwidth memory and interconnect throughput critical to LLM inference and mixture-of-experts (MoE) scaling.

KV cache compression: why 3.5-bit precision is a big deal

When you serve LLMs, you cache the attention “keys” and “values” from previous tokens so the model doesn’t recompute the entire sequence each step. This KV cache is often the dominant memory consumer in long-context or high-concurrency serving. Compressing it without wrecking accuracy can:

- Unlock higher concurrency on the same hardware

- Reduce memory bandwidth bottlenecks

- Lower tail latency for chat, agents, and streaming apps

- Slash inference costs
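To see why the cache dominates memory, the arithmetic is straightforward: every token stores one key vector and one value vector per layer and per KV head. A minimal sketch; the model shape (80 layers, 8 KV heads via grouped-query attention, head dimension 128) and the 128K-token context are illustrative assumptions, not figures from any announcement:

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bits_per_value):
    """Approximate KV cache size: keys + values for every layer, KV head, and token."""
    values_per_token = 2 * layers * kv_heads * head_dim  # 2 = one key + one value
    total_values = values_per_token * seq_len * batch
    return total_values * bits_per_value / 8


GIB = 1024 ** 3

# Illustrative model shape (assumption): 80 layers, 8 KV heads, head_dim 128.
shape = dict(layers=80, kv_heads=8, head_dim=128)

fp16 = kv_cache_bytes(**shape, seq_len=128_000, batch=1, bits_per_value=16)
q35 = kv_cache_bytes(**shape, seq_len=128_000, batch=1, bits_per_value=3.5)

print(f"FP16 cache:    {fp16 / GIB:.1f} GiB")  # 39.1 GiB
print(f"3.5-bit cache: {q35 / GIB:.1f} GiB")   # 8.5 GiB
print(f"ratio:         {fp16 / q35:.2f}x")     # 4.57x
```

For a single 128K-token sequence under these assumptions, the FP16 cache alone is about 39 GiB, so concurrency on a fixed memory budget scales almost inversely with bits per value. The sketch ignores per-group scales and other quantization metadata.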

A 6x shrink at roughly 3.5 bits per value points to mixed precision: storing most of the cache at ultra-low bitwidth while preserving critical signal through calibration and dequantization tricks. (Against a 16-bit baseline, a uniform 3.5 bits would yield only about 4.6x on its own, so the headline ratio likely reflects lower bitwidths for part of the cache or a different baseline.) This fits the broader arc of research on inference efficiency. See, for example:

- FlashAttention, which improved attention compute and memory IO efficiency

- Speculative decoding, which accelerates generation by drafting tokens with a smaller model and verifying them with the larger one
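Low-bit KV cache schemes differ in detail, but most store small integer codes plus per-group scale factors. The sketch below is a generic per-group asymmetric uniform quantizer, not TurboQuant's published algorithm; the group size, bit width, and 32 bits of per-group metadata are illustrative assumptions. It also shows why "effective" bits per value exceed the nominal code width once metadata is counted:

```python
def quantize_group(values, bits):
    """Asymmetric uniform quantization of one group to unsigned `bits`-bit integer codes."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1                      # e.g. 7 for 3-bit codes (0..7)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo                       # `lo` doubles as the zero point


def dequantize_group(codes, scale, zero):
    return [c * scale + zero for c in codes]


def effective_bits(bits, group_size, metadata_bits=32):
    """Code bits plus per-group metadata (e.g. FP16 scale + FP16 zero point) amortized per value."""
    return bits + metadata_bits / group_size


# Toy slice of a cached key vector, split into groups of 4 (real systems use larger groups).
key = [0.12, -0.40, 0.33, 0.05, 1.10, 0.95, 1.02, 0.88]
group_size, bits = 4, 3

reconstructed = []
for start in range(0, len(key), group_size):
    codes, scale, zero = quantize_group(key[start:start + group_size], bits)
    assert all(0 <= c <= (1 << bits) - 1 for c in codes)  # every code fits in `bits` bits
    reconstructed += dequantize_group(codes, scale, zero)

max_err = max(abs(a - b) for a, b in zip(key, reconstructed))
print(f"max abs error: {max_err:.3f}")              # bounded by about half of each group's scale
print(f"effective bits: {effective_bits(3, 64)}")   # 3.5
```

The last line shows one way, among several, to arrive at about 3.5 effective bits: 3-bit codes plus a 16-bit scale and a 16-bit zero point per 64-value group. Whether the reported TurboQuant figure is counted this way is not stated in the report. Measuring reconstruction error like this is a cheap first check, not a substitute for task-level canaries.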

If TurboQuant generalizes across architectures, you’ll see real-world wins in throughput, especially for:

- Long-context customer support and legal review tools

- Multi-agent planners that repeatedly attend to large histories

- Streaming assistants with tools and function calling

Caveats: low-bit quantization can inject subtle failures—drift in long sequences, sensitivity to rare tokens, or degraded multi-hop reasoning. Treat this as a deployment optimization with guardrails, not a universal switch. Adopt A/B canaries and monitor hallucination rates, task accuracy, and tail-latency SLOs before scaling.

2) Models: OpenAI’s GPT‑5.5 and Codex 2.0 Get Specific

OpenAI launched GPT‑5.5 and Codex 2.0 with rolling updates through April 30. The headliner: GPT‑5.5 Pro targets precision in law and medicine using enhanced test-time compute—more deliberate reasoning at inference, selectively spending extra compute on harder problems.

This echoes OpenAI’s earlier work scaling “test-time compute” in reasoning models, a technique that pushes more thinking into inference rather than just training. For background on the approach and why it matters for complex tasks, see OpenAI’s o1 announcement, “Learning to Reason with LLMs.”

What “more thinking at inference” changes

- Cost model shifts. Instead of flat per-token costs, inference becomes elastic: easy queries run cheap, tough ones invoke deeper chains-of-thought or self-consistency checks.

- Governance pressure increases. For high-stakes use cases (e.g., medical summaries, legal memo drafting), you need auditable reasoning traces. Test-time compute can improve accuracy but also adds nondeterminism if not controlled.

- Prompting evolves. Tool use, citations, and structured outputs become the default. You’ll want schemas and validators to catch drift early.
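The elastic cost model can be illustrated with adaptive self-consistency: sample answers one at a time, stop as soon as a clear majority emerges, and never exceed a hard cap. This is a minimal sketch of one well-known test-time compute technique, not OpenAI's implementation; `sample_answer` stands in for a hypothetical call to your model, and the thresholds are assumptions:

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def adaptive_self_consistency(
    sample_answer: Callable[[], str],
    min_samples: int = 3,
    max_samples: int = 10,
    agreement: float = 0.8,
) -> tuple[str, int]:
    """Sample answers until one holds `agreement` share of votes, or the cap is reached.

    Returns (majority_answer, samples_used). Easy prompts stop at `min_samples`;
    contested ones spend up to `max_samples` model calls, which acts as a per-request budget.
    """
    votes = Counter()
    for used in range(1, max_samples + 1):
        votes[sample_answer()] += 1
        answer, count = votes.most_common(1)[0]
        if used >= min_samples and count / used >= agreement:
            return answer, used
    return votes.most_common(1)[0][0], max_samples


# Deterministic stand-ins for a sampled model call (hypothetical).
easy = cycle(["42"])
hard = cycle(["A", "B", "A", "C"])
print(adaptive_self_consistency(lambda: next(easy)))  # ('42', 3)
print(adaptive_self_consistency(lambda: next(hard)))  # ('A', 10)
```

Logging `samples_used` per request is also a direct way to see which queries consume the test-time compute budget.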

For regulated domains, require:

- Deterministic modes where applicable

- Source citation requirements (e.g., RAG retrieval or authoritative guideline linking)

- “Stop conditions” for chain-of-thought depth

- Human-in-the-loop checkpoints for clinical or legal adoption
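Citation and schema requirements like these can be enforced mechanically before an answer reaches a human reviewer. A minimal sketch using only the Python standard library; the response shape (`answer`, plus `citations` that each carry `source_id` and `quote`) and the set of retrieved source IDs are hypothetical conventions, not any vendor's format:

```python
import json


def validate_response(raw: str, retrieved_ids: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the response passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]

    problems = []
    answer = data.get("answer")
    if not isinstance(answer, str) or not answer.strip():
        problems.append("missing or empty 'answer'")

    citations = data.get("citations")
    if not isinstance(citations, list) or not citations:
        problems.append("at least one citation is required")
        return problems

    for i, cite in enumerate(citations):
        if not isinstance(cite, dict):
            problems.append(f"citation {i} is not an object")
            continue
        source_id = cite.get("source_id")
        # Citing a document that was never retrieved is a strong hallucination signal.
        if not isinstance(source_id, str) or source_id not in retrieved_ids:
            problems.append(f"citation {i} references unknown source {source_id!r}")
        quote = cite.get("quote")
        if not isinstance(quote, str) or not quote.strip():
            problems.append(f"citation {i} has no supporting quote")
    return problems


response = json.dumps({
    "answer": "Start with the guideline-recommended dose.",
    "citations": [{"source_id": "guideline-7", "quote": "The recommended starting dose is X."}],
})
print(validate_response(response, retrieved_ids={"guideline-7"}))  # []
```

A non-empty result can route the response back for regeneration or straight to the human-in-the-loop checkpoint instead of the end user.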

Codex 2.0: intent-driven coding on GB200-class hardware

OpenAI’s Codex 2.0 emphasizes intent-driven coding and is optimized for NVIDIA GB200-class systems, where higher memory bandwidth, improved attention kernels, and larger parallelism windows accelerate code synthesis and multi-file reasoning.

Practical implications:

- Developer productivity moves from “code completion” to “spec completion.” The better you express intent (requirements, invariants, tests), the more the model automates.

- Tooling integration is everything. Great AI coding requires tight IDE hooks, reliable dependency resolution, and constant security scanning of generated code.

- Infrastructure must prepare for longer contexts. Multi-repo reasoning benefits from KV cache compression and high-speed storage backends.

Security reminder: pair AI codegen with continuous SAST/DAST and guardrails for secret leakage. OWASP’s guidance on LLM application risks is a good reference—see the OWASP Top 10 for LLM Applications.
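As a last-line check before generated code is committed, even a small pattern scan catches obvious leaks. The sketch below is illustrative only: the three regexes are an assumed, tiny subset of the formats that dedicated secret scanners cover, and it does not replace SAST/DAST or a purpose-built tool:

```python
import re

# Illustrative patterns (assumption): a tiny subset of real-world secret formats.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_credential": re.compile(
        r"""(?i)\b(?:api[_-]?key|secret|password|token)\s*[:=]\s*["'][^"'\s]{8,}["']"""
    ),
}


def find_secrets(code: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspicious line in generated code."""
    hits = []
    for lineno, line in enumerate(code.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


# Hypothetical model output; it is only scanned as text, never executed.
generated = '''
import boto3
client = boto3.client("s3", aws_access_key_id="AKIAABCDEFGHIJKLMNOP")
API_KEY = "sk_live_1234567890abcdef"
password = os.environ["DB_PASSWORD"]
'''
print(find_secrets(generated))  # [(3, 'aws_access_key_id'), (4, 'hardcoded_credential')]
```

Note that the last line of the sample, which reads the password from the environment, is correctly not flagged.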

3) Evaluation and Competition: NIST Consortium and DeepSeek V4 Pro

NIST’s consortium evaluated DeepSeek V4 Pro and deemed it China’s top open-weight model, estimating an ~8‑month gap to frontier U.S. models like GPT‑5, with strong cost-efficiency in reasoning. While results, tasks, and exact methodology matter, the signal is clear: open-weight models are closing the practical gap for many workloads.

Two strategic takeaways:

- Open weights ≠ toy models. With the right fine-tunes, retrieval, and safety layers, open weights can meet enterprise quality bars at a fraction of proprietary TCO.

- Standardized evaluation is overdue. Benchmark cherry-picking is still rampant, and institutions like NIST can help. The U.S. AI Safety Institute Consortium provides a forum for shared methodologies and red-teaming resources; learn more on NIST’s program page.

Open-weight vs. closed: a pragmatic comparison

- Benefits of open-weight models:

  - Control: self-hosted deployment, data locality, private fine-tuning

  - TCO: lower per-token cost for steady-state workloads

  - Extensibility: domain adapters, custom safety layers, on-prem support

- Risks and limitations:

  - Maintenance burden: you own patching, upgrades, and observability

  - Security: you must implement your own isolation, prompt injection defenses, and supply chain scanning for weights and datasets

  - Quality variance: frontier performance in edge cases may still favor top proprietary models

Where to use open weights first:

- High-volume, narrow-domain assistants (support, finance ops)

- Structured extraction from known document types

- On-prem RAG for sensitive corpora

- Offline/edge inference where cloud dependency is impractical

4) Platforms and Devices: Meta’s Humanoid Ambition and the App‑Free AI Phone

Meta acquired Assured Robot Intelligence on May 1 and folded it into Meta Superintelligence Labs to build an AI platform for humanoid robotics—think “Android for robots.” The mission: a unified, hardware-abstracted stack for perception, control, memory, and policy updates across a heterogeneous ecosystem of bipedal and mobile robots.

Simultaneously, OpenAI is exploring hardware partnerships with MediaTek and Qualcomm for an app-free AI smartphone concept by 2028. The idea: a conversational, context-aware interface that replaces the icon grid with intent-driven agents.

Why a general-purpose robotics platform is a big deal

- Developer leverage. A stable robotics OS would let developers write once and deploy broadly, making embodied AI viable beyond labs.

- Data network effects. Shared teleoperation logs, simulation-to-real (sim2real) policies, and safety cases could compound across vendors.

- Safety architecture. Centralized policy layers allow standardized guardrails for power management, safe motion primitives, and fail-safe behaviors, supported by industry and standards bodies.

Key research and infrastructure pieces:

- Simulation diversity (procedural tasks, terrains, sensor noise)

- Multimodal foundation models for perception and state estimation

- Real-time control loops with latency guarantees

- Onboard vs. edge/cloud policy balancing, with over-the-air updates and robust rollback

App-free AI phone: opportunity and risk

- Opportunities:

  - Intent-first UX reduces friction: “Plan my trip,” not five app hops

  - Background agents that proactively handle tasks given permissions

  - Multimodal capture (voice, camera, sensors) feeding private on-device models

- Risks:

  - Privacy and consent. Always-on agents must implement explicit boundaries and revocation UX

  - Attack surface. Agents with system privileges require hardened sandboxes and transparent permissions

  - Platform governance. Who decides which agents can run continuously, and what telemetry is allowed?

If your product relies on mobile distribution, start prototyping content and flows that are agent-invokable via intents and schema-bound APIs. Partner early with silicon vendors who provide on-device AI acceleration and confidential computing within the SoC (Qualcomm and MediaTek both expose robust AI runtimes and secure enclaves in their developer stacks).

5) Enterprise and Healthcare: Multi‑Cloud Flexibility and Earlier Cancer Detection

The Microsoft–OpenAI exclusivity period reportedly ended on April 29, opening the door to multi-cloud sales where OpenAI’s offerings can be accessed beyond Azure. Enterprises that previously standardized on Azure OpenAI now have stronger negotiation leverage and portability options. For context on the enterprise integration patterns most teams use today, see the Azure OpenAI Service documentation.

Practical implications:

- Multi-sourcing becomes the default. Expect RFPs that require equivalent SLAs across at least two clouds, or one cloud plus on-prem inference.

- Data and model portability matter. Schema-compatible APIs, vector DB abstractions, and orchestration layers (LangChain/LlamaIndex/serverless runtimes) will help avoid lock-in.

- Governance must be cloud-agnostic. Centrally manage secrets, policies, prompt libraries, and audit logs independent of a single provider.
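Portability is easier when application code depends on a narrow interface rather than on a vendor SDK. A minimal sketch of that idea: the `ChatProvider` protocol, the `FailoverRouter`, the demo provider classes, and `ProviderError` are hypothetical placeholders you would back with real SDK calls (for example, a hosted API as primary and a self-hosted open-weight model as fallback):

```python
from typing import Protocol


class ProviderError(Exception):
    """Raised by a provider adapter on timeouts, 5xx responses, quota errors, etc."""


class ChatProvider(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class FailoverRouter:
    """Try providers in priority order; report which one served each request."""

    def __init__(self, providers: list[ChatProvider]):
        if not providers:
            raise ValueError("need at least one provider")
        self.providers = providers

    def complete(self, prompt: str) -> tuple[str, str]:
        errors = []
        for provider in self.providers:
            try:
                return provider.complete(prompt), provider.name
            except ProviderError as exc:
                errors.append(f"{provider.name}: {exc}")
        raise ProviderError("all providers failed; " + "; ".join(errors))


# Hypothetical stand-ins for real adapters, used only to demonstrate failover.
class DownProvider:
    name = "hosted-primary"

    def complete(self, prompt: str) -> str:
        raise ProviderError("503 Service Unavailable")


class EchoProvider:
    name = "self-hosted-fallback"

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


router = FailoverRouter([DownProvider(), EchoProvider()])
print(router.complete("Summarize the contract"))
# ('echo: Summarize the contract', 'self-hosted-fallback')
```

Because callers only see `complete()`, swapping or reordering providers becomes a configuration change rather than an application rewrite.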

On the healthcare front, Mayo Clinic reported promising results from REDMOD, an AI system that can detect pancreatic cancer up to 16 months earlier than standard detection windows. Pancreatic cancer is notoriously lethal due to late diagnosis, so any validated early detection method could be a lifesaver. For background on Mayo Clinic’s pancreatic cancer research programs, see the institution’s research overview.

What to look for before clinical deployment:

- Prospective validation across multiple sites

- Demographic fairness and subgroup analysis

- Robustness to scanner differences and EHR variability

- Clear actionability: defined care pathways upon positive flags

- Regulatory posture and documentation for clinicians

Healthcare buyers should insist on transparent evaluation methods, out-of-distribution testing, and clear incident reporting channels. Model updates must be versioned with rollback capability and post-market monitoring.
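Versioning with rollback can be as simple as keeping an ordered history of promoted versions per model, with the newest entry active. The in-memory `ModelRegistry` below is a hypothetical sketch of that bookkeeping, not a specific product's API; a production system would persist this state and gate each promotion on validation results:

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: the last version in each history is the active one."""

    history: dict[str, list[str]] = field(default_factory=dict)

    def promote(self, model: str, version: str) -> None:
        versions = self.history.setdefault(model, [])
        if version in versions:
            raise ValueError(f"{model} {version} is already in the history")
        versions.append(version)

    def active(self, model: str) -> str:
        versions = self.history.get(model)
        if not versions:
            raise KeyError(f"no promoted version for {model}")
        return versions[-1]

    def rollback(self, model: str) -> str:
        """Retire the active version and reactivate the one promoted before it."""
        versions = self.history.get(model, [])
        if len(versions) < 2:
            raise RuntimeError(f"nothing to roll back to for {model}")
        retired = versions.pop()
        return f"rolled back {model} from {retired} to {versions[-1]}"


registry = ModelRegistry()
registry.promote("screening-model", "1.0.0")
registry.promote("screening-model", "1.1.0")
print(registry.rollback("screening-model"))  # rolled back screening-model from 1.1.0 to 1.0.0
print(registry.active("screening-model"))    # 1.0.0
```

In a clinical setting, each promotion and rollback would also be written to an audit trail that feeds post-market monitoring.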

6) How to Act on This Week’s AI Moves: Practical Guidance

Use this section to translate the week’s news into concrete steps for your 30‑, 90‑, and 180‑day plans.

For CIOs and CTOs: Chip strategy, TCO, and procurement

- Benchmark beyond FLOPs. Measure end-to-end job throughput, memory-bound slowdowns, and concurrency under realistic traffic.

- Write multi-sourcing into contracts. Require equivalent functionality across two providers (e.g., OpenAI API + an open-weight fallback) to prevent outages from halting operations.

- Negotiate transparent per-token and per-context pricing. If models leverage elastic test-time compute, ask for guardrails on max inference budget and per-request caps.

- Build an LLM serving reference architecture that is hardware-aware. Include KV cache compression toggles, mixed-precision kernels, and autoscaling that accounts for context length, not just QPS.

- Plan for portability. Adopt orchestration layers and model registries that let you swap providers without application rewrites.
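Autoscaling on QPS alone can under-provision when prompts get longer, because each in-flight request holds KV cache in proportion to its context. A minimal sketch of token-aware sizing; the per-token KV size, the 40 GiB per-replica KV budget, and the 0.8 headroom factor are assumptions you would measure for your own model and hardware:

```python
import math

GIB = 1024 ** 3


def replicas_needed(active_contexts, kv_bytes_per_token, kv_budget_per_replica, headroom=0.8):
    """Replicas needed so the resident KV cache of in-flight requests fits in memory.

    active_contexts: token counts (prompt + generated so far) of in-flight requests.
    kv_bytes_per_token: KV cache bytes per token for the deployed model and precision.
    kv_budget_per_replica: accelerator memory reserved for KV cache on one replica.
    Assumes requests can be packed freely across replicas (ignores fragmentation).
    """
    usable = kv_budget_per_replica * headroom
    if active_contexts and max(active_contexts) * kv_bytes_per_token > usable:
        raise ValueError("a single request exceeds one replica's KV budget")
    resident = sum(active_contexts) * kv_bytes_per_token
    return max(1, math.ceil(resident / usable))


per_token = 320 * 1024        # assumed FP16 KV bytes per token for a large model
short_chats = [2_000] * 64    # 64 in-flight requests at 2K tokens each
long_docs = [32_000] * 64     # same request count, 32K tokens each

print(replicas_needed(short_chats, per_token, 40 * GIB))  # 2
print(replicas_needed(long_docs, per_token, 40 * GIB))    # 20
```

The request count is identical in both cases, yet the long-context mix needs ten times the replicas, which is the gap a QPS-only autoscaler would miss.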

For ML and platform teams: Performance engineering checklist

- Enable KV cache optimizations conditionally. Roll out quantized caches behind a feature flag; track task accuracy, latency SLOs, and hallucination deltas.

- Measure the performance of “hard queries.” Segment logs by request difficulty to observe where test-time compute budgets get consumed; cache intermediate reasoning traces if privacy allows.

- Co-design with your hardware. If you’re deploying on GB200-class systems, ensure attention kernels and memory planners are tuned; validate throughput with long-context prompts and tool-calling workloads.

- De-risk with canaries. Before flipping a switch globally, mirror 1–5% of production traffic to new quantization or new silicon, checking for regression in domain-specific KPIs.
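One deterministic way to pick the 1–5% slice is to hash a stable request or session ID, so the same traffic consistently lands in the same arm, and then gate promotion on KPI deltas. A minimal sketch; the metric names, tolerances, and numbers are hypothetical and should come from your own domain KPIs:

```python
import hashlib


def in_canary(request_id: str, percent: float) -> bool:
    """Deterministically route about `percent`% of IDs to the canary (mirrored) arm."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets 0..9999
    return bucket < percent * 100


def regressions(baseline: dict, canary: dict, tolerances: dict) -> list[str]:
    """Return metrics where the canary is worse than baseline by more than its tolerance.

    tolerances maps metric -> (higher_is_better, max_allowed_degradation).
    """
    failed = []
    for metric, (higher_is_better, max_degradation) in tolerances.items():
        delta = canary[metric] - baseline[metric]
        degradation = -delta if higher_is_better else delta
        if degradation > max_degradation:
            failed.append(metric)
    return failed


share = sum(in_canary(f"req-{i}", 5) for i in range(100_000)) / 100_000
print(f"canary share: {share:.3f}")  # close to 0.05 by construction

# Illustrative numbers only, not measurements.
baseline = {"task_accuracy": 0.912, "p99_latency_ms": 840}
canary = {"task_accuracy": 0.897, "p99_latency_ms": 610}
tolerances = {"task_accuracy": (True, 0.01), "p99_latency_ms": (False, 50)}
print(regressions(baseline, canary, tolerances))  # ['task_accuracy']
```

In this toy case the new configuration wins on latency but fails the accuracy gate, which is exactly the trade-off a global switch would have hidden.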

For security and risk teams: Secure-by-design AI

- Threat model agents. Agents with file, network, and tool access must run in isolated sandboxes with least-privilege policies.

- Apply LLM-specific controls. Use prompt filters, output validation, and retrieval whitelists to counter injection and data exfiltration attacks. The OWASP Top 10 for LLM Applications highlights common pitfalls and mitigations.

- Harden the supply chain. Scan model artifacts, dependencies, and container images; verify signatures for model weights. Align with CISA’s Secure by Design principles where applicable.

- Log everything responsibly. Retain prompts, tool calls, and model versions tied to requests, with strict access controls and redaction for sensitive inputs.
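A log record that ties each request to its model version and tool calls, while keeping sensitive input out of the log, might look like the sketch below. The field names, the hard-coded HMAC key, and the two redaction patterns (email addresses and U.S. SSN-shaped numbers) are illustrative assumptions; real redaction needs far broader PII coverage and review:

```python
import hashlib
import hmac
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; production redaction needs much broader PII coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
HASH_KEY = b"replace-with-a-secret-from-your-kms"  # hypothetical; never hard-code in practice


def redact(text: str) -> str:
    return SSN.sub("[SSN]", EMAIL.sub("[EMAIL]", text))


def audit_record(request_id, model, model_version, prompt, tool_calls) -> str:
    """One JSON log line tying a request to its model version and tool calls.

    The raw prompt is not stored: only a redacted copy and a keyed hash, which lets you
    match a prompt later without retaining it (plain hashes of short PII are guessable).
    """
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "request_id": request_id,
        "model": model,
        "model_version": model_version,
        "prompt_redacted": redact(prompt),
        "prompt_hmac": hmac.new(HASH_KEY, prompt.encode("utf-8"), hashlib.sha256).hexdigest(),
        # Log tool names only; arguments may carry sensitive data.
        "tool_calls": [call["name"] for call in tool_calls],
    }, sort_keys=True)


line = audit_record("req-123", "example-model", "v1",
                    "Email jane.doe@example.com, SSN 123-45-6789",
                    [{"name": "lookup_patient", "args": {"id": 7}}])
print(json.loads(line)["prompt_redacted"])  # Email [EMAIL], SSN [SSN]
```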

For product leaders: From features to outcomes

- Productize “spec-first” development. For Codex-class tools, force precise requirements (acceptance tests, contracts, invariants) as the input; track defect rates and PR review time saved.

- Design for an agent-first mobile future. Expose key actions via schemas that a system agent can invoke (create ticket, pay invoice, schedule call), with clear permission prompts and revocation flows.

- Integrate citations. In legal and medical tasks, require models to attach authoritative references. Agents should surface source snippets and confidence so users can verify quickly.
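Exposing an action to a system agent mostly means publishing a typed schema and enforcing consent on every call. The sketch below shows the shape of that contract; the `Action` record, the `ActionGateway`, the `create_ticket` action, and the in-memory permission set are hypothetical and not tied to any mobile OS or agent framework:

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Action:
    name: str
    params: dict[str, type]      # minimal "schema": parameter name -> expected Python type
    handler: Callable[..., Any]


class ActionGateway:
    """Hypothetical broker between a system agent and an app's exposed actions."""

    def __init__(self) -> None:
        self.actions: dict[str, Action] = {}
        self.granted: set[tuple[str, str]] = set()  # (agent_id, action_name) pairs

    def register(self, action: Action) -> None:
        self.actions[action.name] = action

    def grant(self, agent_id: str, action_name: str) -> None:
        # Call only after the user accepts an explicit permission prompt.
        self.granted.add((agent_id, action_name))

    def revoke(self, agent_id: str, action_name: str) -> None:
        self.granted.discard((agent_id, action_name))

    def invoke(self, agent_id: str, action_name: str, args: dict[str, Any]) -> Any:
        action = self.actions.get(action_name)
        if action is None:
            raise LookupError(f"unknown action {action_name!r}")
        if (agent_id, action_name) not in self.granted:
            raise PermissionError(f"{agent_id} may not call {action_name}")
        if set(args) != set(action.params):
            raise ValueError(f"expected {sorted(action.params)}, got {sorted(args)}")
        for key, expected in action.params.items():
            if not isinstance(args[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return action.handler(**args)


gateway = ActionGateway()
gateway.register(Action(
    name="create_ticket",
    params={"title": str, "priority": int},
    handler=lambda title, priority: f"ticket created: {title} (P{priority})",
))
gateway.grant("system-agent", "create_ticket")
result = gateway.invoke("system-agent", "create_ticket", {"title": "Refund request", "priority": 2})
print(result)  # ticket created: Refund request (P2)

gateway.revoke("system-agent", "create_ticket")  # later calls raise PermissionError
```

Keeping grant and revoke as first-class operations is what makes the revocation flow enforceable rather than a UI promise.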

For healthcare innovators: Evidence before excitement

- Demand external validation. Don’t deploy screening tools to patient populations without peer-reviewed, prospective, multi-site validation.

- Bias and subgroup checks. Verify model performance across age, race, sex, and comorbidities; tune thresholds per subgroup if needed.

- Close the loop. Tie positive screens to care pathways with clear clinician decision support; track downstream outcomes to refine thresholds and reduce false positives.
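Subgroup checks start with computing the same screening metrics separately for each group. A minimal sketch; the record format and the toy labels are invented for illustration and say nothing about REDMOD or any real model:

```python
from collections import defaultdict


def subgroup_metrics(records):
    """records: iterable of (subgroup, predicted_positive, actually_positive) tuples.

    Returns {subgroup: {"sensitivity": ..., "specificity": ..., "n": ...}}; a metric is
    None when its denominator is zero (e.g., no true cases in a small subgroup).
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, predicted, actual in records:
        c = counts[group]
        if actual:
            c["tp" if predicted else "fn"] += 1
        else:
            c["fp" if predicted else "tn"] += 1

    results = {}
    for group, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["tn"] + c["fp"]
        results[group] = {
            "sensitivity": c["tp"] / pos if pos else None,  # share of true cases flagged
            "specificity": c["tn"] / neg if neg else None,  # share of non-cases cleared
            "n": pos + neg,
        }
    return results


toy = [
    ("age<60", True, True), ("age<60", False, True), ("age<60", False, False), ("age<60", False, False),
    ("age>=60", True, True), ("age>=60", True, True), ("age>=60", True, False), ("age>=60", False, False),
]
for group, m in subgroup_metrics(toy).items():
    print(group, m)
# age<60 {'sensitivity': 0.5, 'specificity': 1.0, 'n': 4}
# age>=60 {'sensitivity': 1.0, 'specificity': 0.5, 'n': 4}
```

With real cohorts, small subgroups make these point estimates noisy, so confidence intervals belong next to every per-group number before thresholds are adjusted.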

Common mistakes to avoid

- Over-indexing on synthetic benchmarks. Simulate real workloads—long contexts, interruptions, tool use, and multi-agent orchestration.

- One-provider dependence. Avoid architectures that crumble if a single API or region goes down.

- Ignoring quantization edge cases. Validate mission-critical tasks under quantized KV cache; watch for drift in multi-step reasoning.

- Unbounded test-time compute. Set per-request budgets and alerts; unexpected spikes can blow your cost model.

7) Competitive Dynamics: Reading Between the Lines

- Hyperscaler chips will pressure GPU margins. As Amazon proves real revenue with Trainium3/Graviton4, expect the other hyperscalers to expand their silicon roadmaps and incentive programs for customers who commit to their stacks.

- Efficiency work compounds. KV cache compression, better attention kernels, and speculative decoding target different bottlenecks, so their gains can stack. Teams that aggressively adopt these will widen their cost/performance moat.

- Open weights are now a serious enterprise option. With credible evaluations and smart safety layers, the open-weight path will win on TCO for defined tasks, even if closed models lead on edge-case intelligence.

- Platform control is shifting to agents. If an app-free phone materializes, mobile discovery and engagement will flow through intent brokers and system agents. Start designing for “callable” experiences.

FAQ

Q1: What is a KV cache and why does compressing it speed up LLMs? A: The KV cache stores the intermediate attention “keys” and “values” from previously generated tokens so the model can reuse them instead of recomputing. Compressing the cache reduces memory usage and bandwidth, which are frequent bottlenecks in inference, especially for long contexts and high concurrency, leading to lower latency and higher throughput.

Q2: How is test-time compute different from just using a larger model? A: Test-time compute spends more inference cycles on hard problems (e.g., deeper reasoning, self-consistency checks) without permanently increasing the model’s size. It’s an elastic cost: trivial queries stay cheap, while complex ones get extra “thinking,” often improving accuracy in high-stakes domains.

Q3: Should enterprises switch clouds now that Microsoft–OpenAI exclusivity has ended? A: Not necessarily switch, but renegotiate and architect for portability. Multi-sourcing (two clouds or one cloud plus self-hosted open weights) strengthens resilience and pricing power. Abstract your orchestration, vector stores, and prompt libraries to reduce switching friction.

Q4: What’s the difference between open-weight and open-source models? A: Open-weight models make the trained parameters available for use and fine-tuning, under licenses that range from permissive to restrictive. Open-source models release weights and code under open-source terms; the OSI’s Open Source AI Definition additionally expects detailed information about the training data. For enterprises, “open weight” is often enough to self-host with internal safety and monitoring layers.

Q5: Are humanoid robots relevant to most businesses yet? A: Not broadly. But advances in a common robotics platform could accelerate time-to-value for warehousing, manufacturing, and last-meter logistics. For most companies, the near-term value is in simulation, teleoperation support tools, and embodied AI pilots in constrained environments.

Q6: How should healthcare systems evaluate AI claims like early cancer detection? A: Require prospective, multi-site validation; subgroup performance analysis; pre-registered endpoints; and clear clinical pathways. Ensure regulatory alignment and post-deployment monitoring before scaling.

Conclusion: The Week AI Got More Practical—and More Strategic

This AI weekly roundup underscored a bigger pattern: hardware specialization is translating into real revenue and performance; models are trading raw breadth for domain precision with test-time compute; and platforms are eyeing the interfaces—robots and phones—where agents will become the default UX.

For leaders, the playbook is clear:

- Pilot hardware-optimized serving with KV cache compression, but validate accuracy under real workloads.

- Adopt test-time compute where precision pays, paired with deterministic modes, citations, and human review in regulated use.

- Hedge with open weights for known workloads to control TCO and improve data locality.

- Negotiate multi-cloud and portability now, before platform shifts lock in new dependencies.

- In healthcare and other high-stakes fields, demand rigorous evidence, safety layers, and clear escalation paths.

The signal this week wasn’t hype; it was execution. If you align architecture, procurement, and product around these shifts, you’ll ship faster, spend smarter, and be ready when agents and new devices redefine how users get things done.

Discover more at InnoVirtuoso.com

I would love some feedback on my writing, so if you have any, please don’t hesitate to leave a comment here or on whichever platform is convenient for you.

For more on tech and other topics, explore InnoVirtuoso.com anytime. Subscribe to my newsletter and join our growing community—we’ll create something magical together. I promise, it’ll never be boring!


Thank you all—wishing you an amazing day ahead!
