Pentagon Threatens to Cut Ties with Anthropic Over AI Usage Terms: What It Means for National Security, Tech Policy, and the Future of AI Governance

Note: This article discusses Anthropic, maker of Claude. This post was drafted with the help of an AI writing assistant.

If you thought AI policy debates were just for think tanks and Twitter threads, think again. The Pentagon has reportedly threatened to end work with Anthropic—the company behind the popular AI service Claude—over disagreements about how its AI models can be used for national security. That’s not only rare; it’s a flashing red sign that the uneasy alliance between government and AI labs is entering a new, more confrontational phase.

According to reporting and analysis from the Center for Strategic and International Studies (CSIS) and Axios, the dispute centers on whether Anthropic’s usage policies and technical commitments align with what the U.S. government wants from advanced AI in defense and intelligence contexts. Anthropic, for its part, says it’s been engaging in good-faith talks to make sure its models support national security “in line with what the systems can reliably and responsibly accomplish.”

So what’s actually at stake? A lot—ranging from how governments buy and operate cutting-edge AI, to how AI companies hold the line on safety commitments, to whether foundation models will become standard tools in national security missions. Here’s what you need to know, why it matters, and where this may go next.

Source video: CSIS/Axios discussion (published Feb 19, 2025): https://www.youtube.com/watch?v=qHV714ZxLA8

The Flashpoint: Pentagon vs. Anthropic, and an Unusual Threat

- The report: In a public discussion hosted by the CSIS Wadhwani Center for AI and Advanced Technologies and Axios, senior advisor Gregory Allen (formerly director of policy in the Pentagon’s AI office) analyzed a conflict in which the Pentagon threatened to end work with Anthropic over terms and conditions for using its AI models in national security roles.

- Anthropic’s response: The company said it engaged in good-faith conversations to ensure its models could continue to support national security aims consistent with what they can “reliably and responsibly” do.

- Why this is unusual: Defense procurement disputes rarely play out in public. An open threat to end work with a leading AI lab over its usage terms signals that government agencies are newly willing to push vendors to align with mission requirements, even when that clashes with corporate safety policies or brand commitments.

Useful links:

- CSIS Wadhwani Center: https://www.csis.org/programs/wadhwani-center-ai-and-advanced-technologies

- Gregory C. Allen (CSIS): https://www.csis.org/people/gregory-c-allen

What’s the Core Disagreement?

The fault line appears to be where policy meets product reality:

- Usage policies: Anthropic, like other frontier model developers, maintains policies limiting certain sensitive or harmful uses, especially around weapons, targeted surveillance, and escalation risks. See Anthropic’s stated policies: https://www.anthropic.com/policies.

- Government mission requirements: The Pentagon needs reliable access to capabilities that support a wide array of national security tasks (analysis, planning aids, logistics, cyber defense support, multilingual intelligence triage, training simulation, and more). Those needs often come with requirements for availability, auditability, security, special deployment environments, and sometimes use cases that bump up against commercial providers’ acceptable-use restrictions.

Anthropic’s public framing—keeping capabilities aligned with what models can “reliably and responsibly” accomplish—suggests the company is drawing lines around reliability thresholds, safety filters, and where it believes model outputs should be gated by human oversight.

Why This Standoff Matters

- It sets a precedent: Foundation model vendors and defense agencies are still figuring out the rulebook. How this dispute is resolved will influence negotiations across the entire ecosystem.

- It tests “Responsible AI” in practice: Can a company keep meaningful safety guardrails while still supporting high-stakes national security missions?

- It affects procurement strategies: If one lab’s terms are too restrictive, the government may shift to vendors willing to offer special versions, on-prem deployments, or carve-outs—potentially reshaping market shares.

- It pressures policy convergence: Expect more alignment (or open conflict) among frameworks like the DoD’s Responsible AI principles, NIST’s AI Risk Management Framework, and labs’ internal safety standards.

Reference frameworks:

- DoD Responsible AI: https://www.ai.mil/

- NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

- U.S. AI Executive Order (Oct 2023): https://www.whitehouse.gov/briefing-room/presidential-actions/2023/10/30/executive-order-on-safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence/

The Pentagon’s Perspective: What Defense Actually Needs

When the Department of Defense evaluates AI services, a handful of common requirements tend to dominate:

- Reliability and mission assurance: Predictable performance under stress; graceful degradation; clear error characteristics; and well-defined boundaries for what the model can and cannot do.

- Secure deployment: Options for air-gapped or sovereign environments, strong access controls, and model governance that supports classified or sensitive workflows.

- Assured access and availability: SLAs, redundancy, surge capacity, and the ability to continue operations despite cloud outages or vendor-side policy shifts.

- Auditability and traceability: Logs, versioning, change control, and the capacity to explain or reproduce outputs under review.

- Safety and oversight: Human-in-the-loop control for risk-prone tasks; model safety filters aligned with mission needs; and configurable policy layers.

- Legal and policy compliance: Export controls, data protection, privacy, procurement law, and consistent adherence to the DoD’s Responsible AI principles.

Tension emerges when a lab’s acceptable use policy categorically disallows certain mission-adjacent functions—or when a lab limits deployment models that defense agencies see as necessary for security and reliability.

Anthropic’s Safety Commitments: Where the Lines Are Drawn

Anthropic has been public about its focus on alignment and harm reduction, building policies to limit dangerous applications and guide safe use. While the specifics of the Pentagon-Anthropic dispute aren’t fully public, some likely friction points include:

- Prohibited or sensitive uses: Restrictions on targeted violence, weaponization, or invasive surveillance can collide with particular defense-support workflows, even if the government’s planned use is lawful, defensive, or highly supervised.

- Reliability gating: If a model is judged insufficiently reliable for a high-stakes task, Anthropic may want to restrict that application, add more guardrails, or require human review—potentially slowing certain mission timelines.

- Model access modes: Tighter control over fine-tuning, tooling integration, or custom deployments is often part of labs’ safety posture; defense users frequently want the opposite for sovereignty and performance.

- Dynamic policy updates: Safety policies evolve rapidly. Defense clients tend to prefer contractual stability, with predictable change control and negotiated exceptions.

You can review Anthropic’s public policies here: https://www.anthropic.com/policies

This Isn’t the First Big Tech–Pentagon Feud—But It Is Different

A quick look in the rearview:

- Google and Project Maven (2018): Internal employee pushback led Google to step back from a Pentagon AI imagery analysis program, spotlighting the culture clash between Silicon Valley and the defense community. Coverage: New York Times

- JEDI cloud saga (2019–2021): The contentious $10B cloud contract drew legal challenges and raised questions about single-vendor strategy and how the Pentagon should procure at scale.

- Evolving AI lab policies (2023–2025): Leading labs have adjusted their acceptable use policies and deployment models—sometimes controversially—as they navigate real-world demand from enterprise, public sector, and defense.

What’s new now is the centrality of general-purpose, foundation models. Unlike bespoke systems for narrow tasks, these models are flexible, fast-improving, and adaptable—making them incredibly useful but also uniquely hard to govern. That makes acceptable use boundaries, deployment controls, and auditability far more significant—and more negotiable.

Likely Sticking Points in Pentagon–Anthropic Negotiations

While we can’t see the confidential clauses, expect friction around:

- Use case carve-outs: Defining exactly which national security tasks are permitted, which are prohibited, and which require human review or special controls.

- Deployment model: Cloud vs. on-prem vs. air-gapped; who operates and updates the model; how safety layers and content filters are configured in secure environments.

- Reliability thresholds: Documented performance expectations, red-team results, and gating for high-risk tasks (e.g., time-sensitive targeting is very different from research assistance).

- Audit and logging: What’s captured, where it’s stored, who can access it, and how logs are protected in classified contexts.

- Data governance: Data retention, deletion, and privacy guarantees, plus protections against training on customer data unless explicitly approved.

- Change management: When and how safety policies can change; whether government users get a negotiated grace period or stable release channel.

- Indemnification and liability: Who bears risk if an AI-enabled decision goes wrong; how warranties and disclaimers are framed in high-stakes contexts.

- Export control and access control: Guardrails on who can use what; preventing model leakage while enabling coalition and partner operations.

These are nontrivial issues for any enterprise deal; in defense contexts, they’re magnified by mission urgency and legal constraints.

The Broader Policy Backdrop

- Responsible AI in Defense: The DoD has laid out principles and is implementing practical guidance for testing, evaluation, verification, and validation (TEVV). See: https://www.ai.mil/

- NIST AI RMF: The U.S. government’s flagship voluntary framework helps organizations manage AI risks across design, development, deployment, and use. See: https://www.nist.gov/itl/ai-risk-management-framework

- Executive and legislative activity: The October 2023 White House AI Executive Order (rescinded in January 2025) and ongoing congressional interest have pushed toward more formalized expectations around safety testing, disclosure, and critical infrastructure use.

As these frameworks mature, vendors and agencies will have clearer common language—which should, in theory, reduce conflicts. In practice, the speed of frontier model development means terms and policies still shift faster than procurement cycles can accommodate.

Scenarios: Where This Could Go Next

- Best-case resolution: Anthropic and the Pentagon agree on a tailored usage regime: clear carve-outs, human-in-the-loop review for sensitive tasks, robust audit and logging, stable policy change controls, and deployment options that satisfy security needs. The agreement could then become a template for other lab–agency deals.

- Partial detente: The Pentagon diversifies across multiple vendors, using Anthropic for specific workflows where safety alignment is strongest, while tapping other providers (or in-house systems) for use cases Anthropic won’t support.

- Full break: The Pentagon ends engagements and leans into alternative vendors offering more permissive policies or sovereign deployments. That could incentivize a market shift toward vendors who prioritize configurability and on-prem control—potentially at the expense of uniform safety guardrails.

- Policy ripple: Regardless of outcome, this dispute accelerates the creation of standard contract language on AI acceptable use, reliability thresholds, auditability, and safety gating for high-stakes domains.

Implications for Enterprises and Public Sector Teams

Even outside defense, this episode offers practical takeaways:

- Expect policy negotiation: As AI moves deeper into regulated and high-risk domains, acceptable-use terms will be a first-class part of any deal—not boilerplate.

- Reliability thresholds matter: Tie use cases to documented performance and monitoring plans. Require red-team evidence and explicit model limitations in writing.

- Demand configurability: Ask for deployment options, policy controls, and safe “profiles” tuned to your risk posture.

- Lock in change control: Make sure you have notice periods and stable channels for model and policy updates.

- Build human oversight: For consequential decisions, design human-in-the-loop workflows and keep audit trails—because you’ll need to explain outcomes.

A Practical Checklist for Government-Facing AI Contracts

- Scope and purpose: Precise use cases, with explicit prohibited, permitted, and “requires review” categories.

- Safety and reliability: TEVV artifacts, red-team reports, and thresholds for gating high-risk tasks.

- Deployment: Cloud vs. on-prem; enclave/air-gap options; model update cadence; content filter configuration in secure environments.

- Data governance: Retention, deletion, training-use consent, and isolation guarantees.

- Logging and audit: Format, custody, retention windows, incident response triggers, and access controls.

- Change management: Version pinning, deprecation timelines, and policy-update grace periods.

- Access and export control: Role-based access, tenant isolation, and guardrails for international collaboration.

- Legal and liability: Indemnities, warranties, and limitations tailored to mission risk.

- Ethics and oversight: Mapping to DoD Responsible AI principles and NIST AI RMF; documented human-in-the-loop procedures.

What Makes the Foundation Model Era So Hard to Govern

- General-purpose breadth: One model can touch dozens of mission areas, each with different risk profiles, making one-size-fits-all policy nearly impossible.

- Rapid capability shifts: Frontier models improve on monthly or even weekly cadences; procurement and compliance cycles are far slower.

- Behavioral variance: Outputs can vary with prompt wording, the context supplied, sampling settings, and fine-tuning, which complicates reliability guarantees.

- Dual use: The same capability that accelerates analysis can enable misuse if not carefully governed—raising the stakes for acceptable use and deployment controls.

These realities create the structural tension we’re now seeing spill into public view.

Timeline and Signals to Watch

- Near-term statements: Watch for clarifying statements from the Pentagon and Anthropic about whether they’ve found a compromise or are parting ways.

- Procurement patterns: Track whether defense agencies move toward vendors offering sovereign deployments, model leases, or more permissive policy controls.

- Standardization push: Expect more agencies to publish AI contract language templates covering safety, logging, and change management.

- International echoes: Allies with their own AI ambitions will face similar negotiations; NATO and partner nations may coordinate approaches.

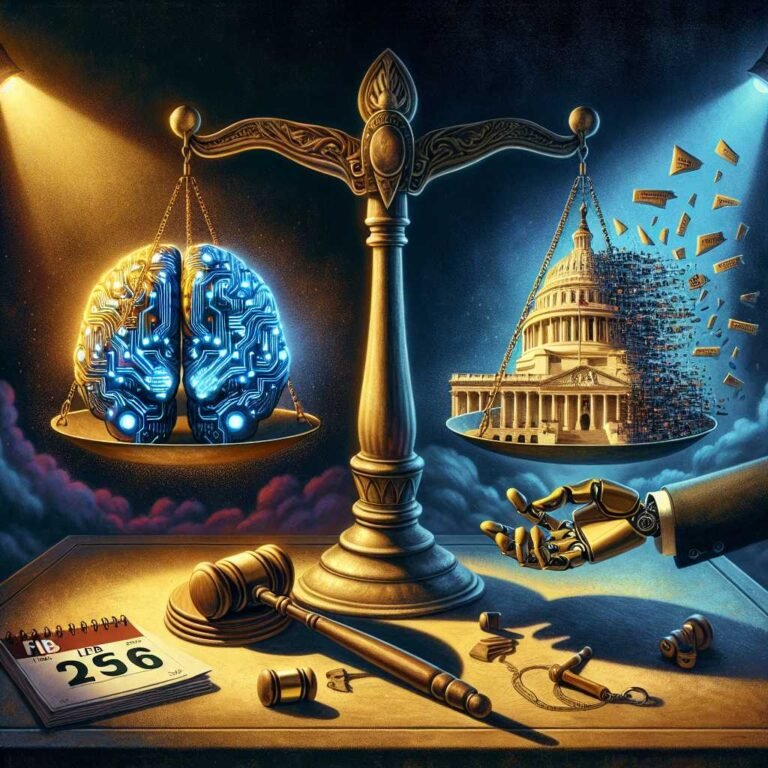

What This Means for AI Governance Going Forward

The Pentagon–Anthropic standoff underscores a core truth: The next phase of AI adoption won’t be gated by raw capability alone. It will be determined by governance—who sets the rules, how flexibly those rules can adapt, and how credibly vendors can promise both safety and mission performance.

If the parties land on a principled, practical compromise, it could become a blueprint for responsible AI in high-stakes government domains. If not, it may catalyze a split market, with some vendors competing on maximum configurability and sovereign control and others doubling down on stricter, uniform safety regimes, leaving agencies to mix and match at the portfolio level.

Either way, the era of “click-through terms” governing critical AI is over. Expect detailed, negotiated, and enforceable AI usage frameworks to become standard operating procedure.

FAQs

Q: Did the Pentagon actually end its work with Anthropic?

A: As of the referenced CSIS/Axios discussion (Feb 19, 2025), the news centered on a threat to end work amid a dispute over usage terms. Anthropic said it was engaging in good-faith talks. Watch for updated statements from both parties for the final outcome. Source: CSIS/Axios video.

Q: What kinds of AI uses does the Pentagon typically want from commercial models?

A: Common areas include research assistance, multilingual document triage, training and simulation support, logistics planning, software and cyber defense tooling, and decision-support analytics—all ideally with strong oversight, logging, and deployment security.

Q: Why would an AI company restrict national security use if the government asks?

A: Safety commitments, legal constraints, and concerns about model reliability for sensitive, time-critical tasks can all motivate restrictions. Companies may also want consistent policies across all customers to prevent misuse or destabilizing applications.

Q: Can safety guardrails coexist with national security missions?

A: Yes, with careful design. Human-in-the-loop oversight, strict auditability, deployment in secure environments, explicit use-case gating, and reliability thresholds can enable responsible use. That’s the heart of many ongoing negotiations.

Q: What frameworks guide “Responsible AI” for defense?

A: The DoD’s Responsible AI principles and implementation work, NIST’s AI Risk Management Framework, and guidance issued under the 2023 U.S. AI Executive Order (since rescinded in January 2025) have been key reference points. See: AI.mil, NIST AI RMF, and the Executive Order.

Q: What should enterprises learn from this?

A: Treat AI acceptable use and deployment architecture as first-class contract matters. Define permitted/prohibited uses, lock in change controls, require TEVV evidence, and insist on auditability. Don’t deploy frontier models in high-stakes workflows without clear reliability thresholds and human oversight.

Q: Is this legal advice?

A: No. This article provides general information and analysis. Organizations should consult legal counsel and security experts for contract and compliance decisions.

The Takeaway

The Pentagon’s threat to end work with Anthropic over AI usage terms isn’t just a contract dispute—it’s a bellwether for how frontier AI will be governed in the highest-stakes settings. The path forward will be written in the fine print: explicit use-case boundaries, reliability thresholds, deployment controls, and stable change management. Whether through compromise or divergence, this episode will shape how governments, AI labs, and critical industries structure responsible AI at scale.

Discover more at InnoVirtuoso.com

I would love some feedback on my writing, so if you have any, please don’t hesitate to leave a comment here or on any platform that’s convenient for you.

For more on tech and other topics, explore InnoVirtuoso.com anytime. Subscribe to my newsletter and join our growing community—we’ll create something magical together. I promise, it’ll never be boring!


Thank you all—wishing you an amazing day ahead!
