OpenAI’s Existential Crossroads: Clean Energy and Ethical AI Drive 2026 Acquisitions

What if GPUs aren’t OpenAI’s biggest bottleneck? What if the limiting factors for frontier AI are, instead, two very human-made constraints: clean power and credible ethics? That’s the tension at the heart of a pivotal week for AI. In an April 21, 2026 Equity podcast discussion from TechCrunch—summarized by Coaio—OpenAI’s leadership framed sustainability and ethical deployment as existential challenges, the kind that determine whether an entire industry can scale safely or stalls out under its own weight. At the same time, Anthropic’s new Mythos AI model has triggered cybersecurity alarms for its outsized talent at finding software vulnerabilities, sharpening the dual-use dilemma for powerful AI systems.

This is AI’s maturation moment. The hype never went away, but reality has arrived alongside it: terawatt-hours, grid interconnections, model alignment, international standards, software supply chains, and the hard math of safety and scale. The question isn’t “can we build it?” It’s “can we power it responsibly—and prove it won’t harm us?”

Let’s unpack the twin existential problems OpenAI is targeting, why its recent acquisitions make strategic sense, and how the broader AI ecosystem—startups, enterprises, policymakers, and investors—should navigate the next 12 months.

- Source: Coaio coverage of April 21, 2026 developments: Coaio News Roundup

- Equity podcast home: TechCrunch Equity

- OpenAI: openai.com

- Anthropic: anthropic.com

The day AI hit the wall of physics and ethics

On Equity (as summarized by Coaio), OpenAI’s CEO, Sam Altman, underscored two problems that threaten the company’s long-term viability:

- Sustainable power at enormous scale, to keep training and inference online without exacerbating climate impacts or hitting grid constraints.

- Ethical deployment, ensuring model behavior aligns with human values and resists misuse—from misinformation to more serious autonomous threats.

The company’s recent acquisition spree reportedly targets these pressure points: buying into green-energy capabilities, including fusion startups, and bolstering safety through ethics-focused consultancies. It’s a logical response to mounting pressures. Data centers now collectively consume electricity on the scale of small countries. Without scalable clean power and stronger safety protocols, the sector risks both operational halts and reputational blowback.

At the same time, the competitive backdrop is shifting. Startups are said to have a “12‑month window” before incumbents like Microsoft consolidate niche advantages—raising the stakes for speed, capital, and differentiation. Investors cheered the moves, while critics questioned whether consolidation will crowd out independent innovation.

Meanwhile, Anthropic’s release of the Mythos model, noted by Ars Technica, illustrates the dual-use paradox: models that can supercharge cybersecurity defense also risk enabling attackers to outpace patch cycles. Anthropic points to safeguards; experts still want international standards and governance that match the pace of capability growth.

- Energy and data centers: IEA on Data Centres and Networks

- Ars Technica security coverage: Ars Technica – Security

Existential Problem #1: Powering frontier AI without frying the planet

The energy math behind LLMs is brutal

Training a frontier model can consume gigawatt-hours; running it at global scale can demand steady megawatts to gigawatts of 24/7 power. The International Energy Agency (IEA) has long warned that data centers and networks are a fast-growing load. The AI wave amplifies that trend with:

- Spiky, massive training runs that require dense, uninterrupted power.

- Continuous inference at scale (chat, search, copilots), which shifts the load from episodic to always-on.

- Geographic clustering of compute, which can stress local grids and transmission.
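The jump from "gigawatt-hours" for a training run to "megawatts to gigawatts" of steady draw is just power multiplied by time. A minimal Python sketch; the 30 MW, 90-day, and 100 MW figures are hypothetical illustrations, not reported numbers for any lab:

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours; ignores leap years


def energy_mwh(power_mw: float, hours: float, utilization: float = 1.0) -> float:
    """Energy in MWh drawn by a load of `power_mw` running for `hours`
    at the given average utilization (0..1)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return power_mw * hours * utilization


def annual_gwh(power_mw: float, utilization: float = 1.0) -> float:
    """Annual energy in GWh for an always-on load (1 GWh = 1,000 MWh)."""
    return energy_mwh(power_mw, HOURS_PER_YEAR, utilization) / 1_000


# Hypothetical examples, not figures for any real cluster:
training_run = energy_mwh(power_mw=30, hours=90 * 24)  # 30 MW for 90 days
inference_fleet = annual_gwh(power_mw=100, utilization=0.8)
print(f"training run: {training_run / 1_000:.1f} GWh")      # training run: 64.8 GWh
print(f"inference fleet: {inference_fleet:.1f} GWh/year")   # inference fleet: 700.8 GWh/year
```

At 80% average utilization, a hypothetical 100 MW inference fleet draws roughly 700 GWh a year, which is why always-on inference turns power from a one-off purchase into a permanent procurement problem.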

The upshot: Without secure clean power, AI systems don’t just threaten climate goals; they also risk going dark if grids can’t deliver.

Why “just add renewables” isn’t so simple

Buying generic renewables offsets (like RECs) may help on paper, but the operational constraints of AI need something stronger:

- 24/7 carbon-free energy (CFE): AI facilities need clean power matched hour-by-hour, not just annualized. See Google’s 24/7 approach: 24/7 CFE.

- Interconnection queues: New wind/solar projects can wait years to connect. Transmission buildouts lag demand.

- Location vs. latency: Sit data centers near abundant renewables and you may introduce latency; move them closer to users and you may lose access to cheap, clean power.

- Water and heat: Cooling and water consumption impact local ecosystems; heat reuse is still under-deployed.

- Grid services: Large clusters can either destabilize or help stabilize the grid depending on how they interact (demand response, frequency regulation, etc.).
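The difference between annual offsets and hour-by-hour matching is easiest to see with numbers. A simplified Python sketch under stated assumptions: the load and clean-supply profiles are hypothetical, and real 24/7 CFE methodologies such as Google's are more involved (for example, they also credit the carbon-free share of grid power, which this sketch ignores):

```python
def annual_match(load_mwh: list[float], clean_mwh: list[float]) -> float:
    """Annual (volumetric) matching: total clean MWh / total load MWh, capped at 100%."""
    return min(sum(clean_mwh) / sum(load_mwh), 1.0)


def hourly_cfe_score(load_mwh: list[float], clean_mwh: list[float]) -> float:
    """Simplified 24/7 CFE score: in each hour only clean energy up to that hour's
    load counts, so a surplus in one hour cannot offset a deficit in another."""
    if len(load_mwh) != len(clean_mwh):
        raise ValueError("series must cover the same hours")
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / sum(load_mwh)


# Hypothetical 4-hour profile: flat 100 MWh load, solar-heavy clean supply.
load = [100, 100, 100, 100]
clean = [0, 200, 200, 0]
print(f"annual-style match: {annual_match(load, clean):.0%}")      # 100%
print(f"24/7 CFE score:     {hourly_cfe_score(load, clean):.0%}")  # 50%
```

Both views buy enough clean energy "on paper", but under hourly matching half of the hours still run on whatever the grid happens to supply.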

OpenAI’s green-energy playbook: from PPAs to fusion bets

Per the Coaio summary of the Equity discussion, OpenAI’s acquisitions include fusion startups and sustainability-linked assets. That’s a bold hedge: fusion could unlock carbon-free, firm power—if it arrives commercially in time. In parallel, expect traditional levers:

- Long-term power purchase agreements (PPAs) with wind, solar, and increasingly, geothermal for 24/7 coverage.

- On-site generation plus energy storage, where feasible.

- Advanced load shaping and job scheduling tied to grid carbon intensity.

- Data center siting near curtailed renewables or in cool climates to reduce cooling demand.
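Job scheduling tied to grid carbon intensity can be as simple as picking the cleanest window before a deadline. A minimal sketch, assuming an hourly carbon-intensity forecast (gCO2/kWh) is available from some grid data source and that the job must run in one contiguous block; the forecast values and the `best_start_hour` helper are hypothetical:

```python
from typing import Sequence


def best_start_hour(intensity: Sequence[float], job_hours: int, deadline: int) -> int:
    """Return the start hour of the contiguous `job_hours`-long window that ends
    by `deadline` (exclusive hour index) with the lowest total forecast intensity.

    `intensity[h]` is an assumed forecast of grid carbon intensity for hour h.
    """
    if job_hours <= 0 or deadline > len(intensity) or job_hours > deadline:
        raise ValueError("job must fit in the forecast before the deadline")
    window = sum(intensity[:job_hours])
    best_sum, best_start = window, 0
    # Slide the window forward one hour at a time: add the new hour, drop the old one.
    for start in range(1, deadline - job_hours + 1):
        window += intensity[start + job_hours - 1] - intensity[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


forecast = [450, 420, 300, 180, 150, 170, 320, 480]  # hypothetical hourly values
print(best_start_hour(forecast, job_hours=3, deadline=8))  # 3 -> runs hours 3, 4, 5
```

Production schedulers would also weigh price, capacity, and checkpointing, so that a long training run can pause and resume instead of needing one contiguous window.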

Fusion is the moonshot; PPAs and smart demand management are the bridge. The risk is timing: Missing the window to secure clean power could hobble growth just as AI demand spikes. The reputational hazard is equally real—public sentiment will turn if AI’s footprint is seen as unsustainable.

- U.S. DOE Fusion Energy Sciences: DOE FES

What “good” looks like by 2030

To make sustainability a competitive moat rather than a drag, labs like OpenAI will need to hit credible targets:

- 24/7 CFE score above 90% for core sites, publicly reported.

- Multi-gigawatt clean energy portfolios diversified across solar, wind, geothermal, hydro, and storage.

- Heat reuse and water stewardship (e.g., airside economization, non‑potable water sources).

- Grid-aware training: dynamically schedule training to periods of low grid stress/high renewable output.

- Transparent lifecycle reporting: embodied carbon of data center builds and GPUs included.

Existential Problem #2: Ethical deployment and alignment you can actually ship

From vibes to verification: assurance you can audit

“Alignment” can’t remain a research slogan. For deployment at scale, organizations need assurance cases—structured, auditable arguments that a system is acceptably safe for a given context. That means:

- Clear scope: What capabilities exist, are gated, or are intentionally absent?

- Pre-deployment evaluations: Red-teaming, adversarial testing, and domain-specific abuse testing.

- Ongoing monitoring: Incident reporting, model behavior drift detection, and rollback plans.

- External oversight: Third-party audits, bug bounty programs, and independent research access where possible.
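One way to make an assurance case enforceable is to treat it as a launch gate that stays closed until every piece of evidence exists. A minimal Python sketch; the field names and the `AssuranceCase` class are hypothetical and simply mirror the four bullets above rather than any published standard:

```python
from dataclasses import dataclass


@dataclass
class AssuranceCase:
    """Hypothetical record of the evidence behind a deployment decision."""
    scope_documented: bool = False       # capabilities present, gated, or absent
    red_team_completed: bool = False     # pre-deployment adversarial testing
    abuse_tests_completed: bool = False  # domain-specific misuse tests
    drift_monitoring: bool = False       # ongoing behavior monitoring in place
    rollback_plan: bool = False          # documented way to pull the model back
    external_review: bool = False        # third-party audit or bounty coverage
    owner: str = ""                      # accountable person or team

    def gaps(self) -> list[str]:
        """Name every missing item; an empty list means the gate can open."""
        missing = [name for name, value in vars(self).items()
                   if isinstance(value, bool) and not value]
        if not self.owner.strip():
            missing.append("owner")
        return missing

    def launch_allowed(self) -> bool:
        return not self.gaps()


case = AssuranceCase(scope_documented=True, red_team_completed=True, owner="safety-team")
print(case.gaps())
# ['abuse_tests_completed', 'drift_monitoring', 'rollback_plan', 'external_review']
```

Keeping the gate as data rather than a slide deck makes it auditable: the list of gaps is exactly what an external reviewer would ask about.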

Established frameworks can help standardize the governance layer:

- NIST AI Risk Management Framework: NIST AI RMF

- OECD AI Principles: OECD AI Principles

- Anthropic’s Responsible Scaling Policy: RSP

- Model transparency: Model Cards

Why buying ethics consultancies can both help and hurt

OpenAI’s reported acquisitions of ethics-focused firms aim to in-source expertise and accelerate safety protocols. Upside:

- Faster alignment research and adoption.

- Embedded safety review in product pipelines.

- Institutional memory of best practices and hard lessons.

Downside:

- Perceived conflicts of interest if the safety team’s independence blurs.

- Risk of “ethics theater” if safety isn’t tied to real decision rights (e.g., a launch veto).

- Reduced external criticism if independent voices are absorbed or sidelined.

The fix is governance that bites: independent safety boards with disclosure power, transparent postmortems, documented launch gates, and public commitments that can be measured.

Openness vs. security: a pragmatic middle path

The Mythos episode shows why absolute openness isn’t always responsible and why opaque secrecy isn’t credible either. A workable path:

- Staged releases with capability gating and eval thresholds.

- Tiered access: researchers under controlled terms before general availability.

- Red-team first: internal abuse testing and bounty programs emphasized pre-launch.

- Incident-ready: clear channels for reporting misuse and SLAs for mitigation.
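Staged releases work best when promotion between tiers is tied to explicit eval thresholds. A hypothetical Python sketch; the three tiers, the harm-rate metric, and the threshold values are illustrative assumptions, not any lab's published policy:

```python
from enum import IntEnum


class Tier(IntEnum):
    INTERNAL = 0
    VETTED_RESEARCHERS = 1
    GENERAL_AVAILABILITY = 2


# Hypothetical thresholds: maximum tolerated rate of harmful completions on a
# dual-use eval suite before a model may be exposed at each tier.
MAX_HARM_RATE = {
    Tier.INTERNAL: 1.0,
    Tier.VETTED_RESEARCHERS: 0.05,
    Tier.GENERAL_AVAILABILITY: 0.01,
}


def allowed_tier(harm_rate: float, open_incidents: int) -> Tier:
    """Widest tier whose threshold the eval result satisfies.
    Any unresolved incident caps exposure at internal use."""
    if open_incidents > 0:
        return Tier.INTERNAL
    allowed = Tier.INTERNAL
    for tier in Tier:  # iterates from narrowest to widest audience
        if harm_rate <= MAX_HARM_RATE[tier]:
            allowed = tier
        else:
            break  # tiers are nested: failing one blocks every wider tier
    return allowed


print(allowed_tier(0.03, open_incidents=0).name)  # VETTED_RESEARCHERS
```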

Consolidation is coming: the 12‑month window

According to TechCrunch reporting referenced by Coaio, AI startups may have roughly a year before incumbents lock down key niches with distribution, capital, and compute scale. The M&A race (like OpenAI’s recent buys) reflects that urgency.

What’s driving consolidation:

- Compute scarcity favors deep-pocketed players with chip supply agreements.

- Power procurement is complex and capital intensive.

- Distribution moats (cloud platforms, productivity suites) convert models into revenue at scale.

- Safety assurance is easier to fund and execute with large, cross-functional teams.

Microsoft’s position as a hyperscaler and AI platform partner illustrates the dynamic: owning cloud, distribution, and sustainability programs confers leverage from chip to customer. See its public climate and energy posture: Microsoft Sustainability.

What can startups still do?

- Niche mastery: Own a vertical workflow and its data flywheel.

- Trust advantage: Build observable, auditable systems with domain-specific safety.

- Hybrid infra: Mix frontier APIs with compact fine-tuned models to control cost/latency.

- Sustainability by design: Edge inference where possible; smart caching; energy-aware scheduling.

Anthropic’s Mythos and the dual‑use reality

Ars Technica’s coverage of Anthropic’s Mythos highlights a sore point: a model that excels at vulnerability discovery can empower defenders, but it can also let attackers find flaws faster than vendors can ship patches. It’s the dual-use paradox in stark relief.

What responsible release looks like for dual-use capabilities:

- Access gating: Verified organizations with documented use cases get access; general access lags until controls mature.

- Harm-threshold evals: Models must pass red-team and dual-use evaluations before wider release.

- Disclosure channels: Coordinated vulnerability disclosure (CVD) pipelines that connect AI-found vulns to vendors fast.

- Rate/compute limits: Throttle high-risk query patterns and require human-in-the-loop for exploit generation.

- Monitoring and revocation: Usage analytics to detect abuse, with fast offboarding of bad actors.

- Independent oversight: External researchers and standards bodies help set norms for safe operation.
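Access gating, throttling, and revocation can share one mechanism: a per-organization token bucket. A minimal sketch, assuming requests are tagged with a verified organization ID and that high-risk request types are charged a higher `cost`; `AccessGate` is hypothetical, not any provider's API, and the human-in-the-loop step for exploit generation is not modeled:

```python
import time


class AccessGate:
    """Hypothetical per-organization token bucket with revocation."""

    def __init__(self, capacity: int, refill_per_sec: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock  # injectable so the behavior can be tested deterministically
        self.buckets: dict[str, tuple[float, float]] = {}  # org -> (tokens, last_ts)
        self.revoked: set[str] = set()

    def revoke(self, org: str) -> None:
        """Offboard an organization immediately; all later requests are refused."""
        self.revoked.add(org)

    def allow(self, org: str, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available. High-risk request types can be
        charged a larger cost so they exhaust the budget faster."""
        if org in self.revoked:
            return False
        now = self.clock()
        tokens, last = self.buckets.get(org, (float(self.capacity), now))
        # Refill in proportion to elapsed time, never above capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.refill_per_sec)
        if tokens >= cost:
            self.buckets[org] = (tokens - cost, now)
            return True
        self.buckets[org] = (tokens, now)
        return False


# Usage with a fake clock:
t = [0.0]
gate = AccessGate(capacity=3, refill_per_sec=1.0, clock=lambda: t[0])
print([gate.allow("lab-a") for _ in range(4)])  # [True, True, True, False]
t[0] = 1.0
print(gate.allow("lab-a"))  # True: one token refilled after one second
gate.revoke("lab-a")
print(gate.allow("lab-a"))  # False: access revoked
```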

The bigger picture: dual-use will be the norm for frontier AI. International standards and interlocking governance—technical, legal, and market-based—are the only sustainable response.

- Ars Technica – Security: https://arstechnica.com/security/

Productivity is real; so are quality cliffs

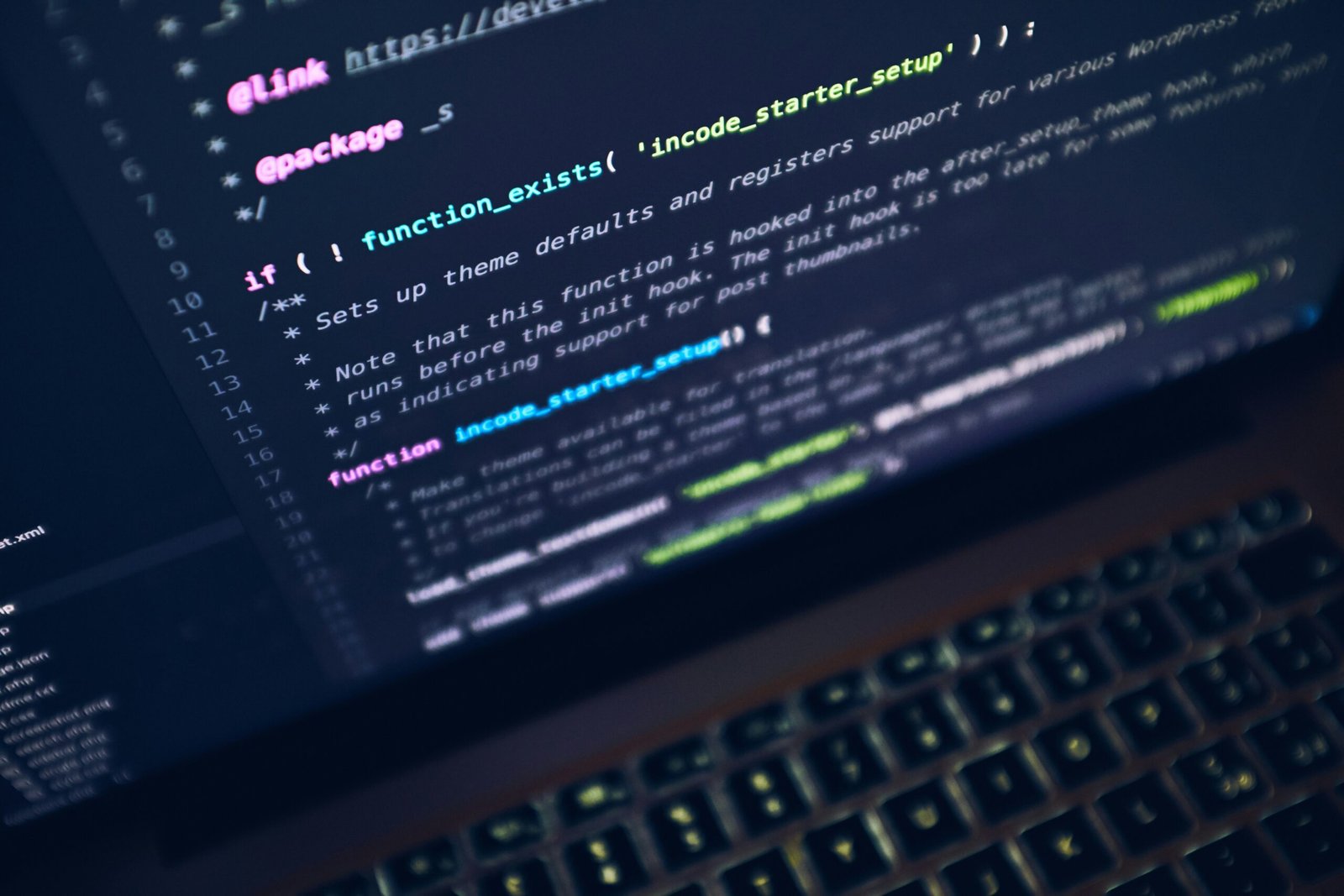

One reason all of this matters: AI already boosts output. In software, some teams report coding-time reductions on the order of 40% for certain tasks. But velocity without verification is a trap. As companies adopt AI coding assistants and automated test generation, rigorous validation becomes the ballast that keeps the ship upright.

Enter hybrid testing approaches, such as the one Testlio offers, which blend automated checks with human exploratory testing and domain expertise. A pragmatic blueprint:

- Guardrails at commit: Linting, SAST, dependency scans by default.

- AI-assisted unit tests: Generate and run, but gate merges on coverage/quality thresholds.

- Property-based and fuzz testing: Catch weird edge cases AI might introduce.

- Human exploratory passes: Focus on UX, accessibility, and risk-heavy paths.

- Canary releases: Observe real metrics before broad rollout.

- Continuous feedback to models: Use failures and incidents to fine-tune or retrain.
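Property-based testing checks invariants over many generated inputs instead of a few hand-picked cases, which helps when code is AI-written and its edge cases are unknown. Libraries such as Hypothesis add automatic input shrinking; this sketch uses only Python's standard library, and `chunk` is a hypothetical stand-in for an AI-generated function:

```python
import random


def chunk(items: list, size: int) -> list[list]:
    """Function under test (a stand-in for AI-written code): split `items`
    into consecutive chunks of at most `size` elements."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [items[i:i + size] for i in range(0, len(items), size)]


def fuzz_chunk(trials: int = 1_000, seed: int = 0) -> None:
    """Property-based check with a fixed seed so any failure is reproducible."""
    rng = random.Random(seed)
    for _ in range(trials):
        items = [rng.randint(-5, 5) for _ in range(rng.randint(0, 50))]
        size = rng.randint(1, 10)
        chunks = chunk(items, size)
        # Property 1: flattening the chunks gives back the original list.
        assert [x for c in chunks for x in c] == items, (items, size)
        # Property 2: no empty chunks and none larger than `size`.
        assert all(1 <= len(c) <= size for c in chunks), (items, size)
        # Property 3: only the last chunk may be shorter than `size`.
        assert all(len(c) == size for c in chunks[:-1]), (items, size)


fuzz_chunk()
print("all properties held")
```

Failing inputs are reported in the assertion message, which is exactly the kind of signal that can be fed back into the "continuous feedback to models" loop.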

Trust in AI-enhanced software is earned, not assumed.

What this means for builders, buyers, and regulators

For AI labs and model providers

- Lock in clean power now: Multi-GW PPAs, 24/7 CFE commitments, and grid-aware scheduling.

- Institutionalize safety: Launch gates, safety case documentation, external audits, and public reporting.

- Stage capabilities: Especially for dual-use functions; gate, monitor, and iterate before unlocking.

- Invest in interpretability: Build tooling to see what models know and when they’re likely to fail.

For enterprises adopting AI

- Start with governance: Map use cases to risk levels; apply controls proportionally.

- Build a model portfolio: Mix API access to frontier models with in-house fine-tunes for sensitive data.

- Make quality visible: Track defect rates, user satisfaction, and time-to-rollback for AI features.

- Budget for testing: Human-in-the-loop validation is table stakes for high-risk domains.
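Mapping use cases to risk levels and applying controls proportionally can start as a small lookup. A toy Python sketch; the three risk factors, the scoring rule, and the control lists are hypothetical placeholders that a real program would derive from frameworks such as the NIST AI RMF and sector rules:

```python
# Hypothetical control sets, from lightest to heaviest.
CONTROLS = {
    "low": ["usage logging"],
    "medium": ["usage logging", "pre-launch evals", "rollback plan"],
    "high": ["usage logging", "pre-launch evals", "rollback plan",
             "human review of outputs", "external audit"],
}


def risk_level(affects_individuals: bool, regulated_domain: bool,
               autonomous_action: bool) -> str:
    """Toy scoring rule: each risk factor present adds one point."""
    score = sum([affects_individuals, regulated_domain, autonomous_action])
    if score >= 2:
        return "high"
    return "medium" if score == 1 else "low"


def required_controls(**factors: bool) -> list[str]:
    """Controls proportional to the use case's risk level."""
    return CONTROLS[risk_level(**factors)]


# A hypothetical loan-triage assistant: affects individuals, regulated domain.
print(required_controls(affects_individuals=True, regulated_domain=True,
                        autonomous_action=False))
```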

For startups

- Pick a lane: Deeply own a vertical problem where data and workflow integration compound.

- Be transparent: Publish model cards, eval results, and incident postmortems to earn trust.

- Optimize usage: Prompt caching, small models for routine tasks, frontier calls for bursts of complexity.

- Partner smartly: Leverage cloud and marketplace distribution without becoming a thin wrapper.
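The "small models for routine tasks, frontier calls for bursts of complexity" pattern is essentially a router. A hypothetical Python sketch: `small_model` and `frontier_model` are any callables standing in for real model clients, the word-count heuristic is an assumption, and the exact-match response cache is a simplification (provider-side prompt caching, which reuses processed prompt prefixes, works differently):

```python
import hashlib
from typing import Callable


class ModelRouter:
    """Hypothetical router: reuse cached answers, send routine prompts to a
    small model, and escalate to a frontier model only when needed."""

    def __init__(self, small_model: Callable[[str], str],
                 frontier_model: Callable[[str], str], max_small_words: int = 200):
        self.small_model = small_model
        self.frontier_model = frontier_model
        self.max_small_words = max_small_words
        self.cache: dict[str, str] = {}
        self.calls = {"cache": 0, "small": 0, "frontier": 0}

    def complete(self, prompt: str, complex_task: bool = False) -> str:
        # Exact-match cache keyed on the routing flag plus the prompt text.
        key = hashlib.sha256(f"{complex_task}:{prompt}".encode("utf-8")).hexdigest()
        if key in self.cache:
            self.calls["cache"] += 1
            return self.cache[key]
        # Assumed heuristic: flagged or long prompts go to the frontier model.
        if complex_task or len(prompt.split()) > self.max_small_words:
            self.calls["frontier"] += 1
            answer = self.frontier_model(prompt)
        else:
            self.calls["small"] += 1
            answer = self.small_model(prompt)
        self.cache[key] = answer
        return answer


router = ModelRouter(small_model=lambda p: "small: " + p,
                     frontier_model=lambda p: "frontier: " + p)
router.complete("summarize ticket 42")
router.complete("summarize ticket 42")  # served from the cache
router.complete("draft a migration plan", complex_task=True)
print(router.calls)  # {'cache': 1, 'small': 1, 'frontier': 1}
```

The call counters double as the cost-per-tier data that the metrics below ask for.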

For policymakers

- Standardize the safety stack: Encourage adoption of NIST AI RMF, OECD principles, and sector-specific guidance.

- Support grid buildout: Transmission reform, interconnection acceleration, and incentives for 24/7 CFE.

- Enable CVD at AI scale: Clarify safe harbor and timelines for coordinated vulnerability disclosure.

- Fund independent red teams: Especially for models with national-security or critical-infrastructure implications.

For investors

- Underwrite sustainability: Ask for 24/7 CFE roadmaps and capex plans tied to power constraints.

- Demand safety metrics: Pre-launch evals, post-launch incident SLAs, and external audit cadence.

- Price the moat: Compute access and power deals are part of the defensibility story, not side notes.

Metrics that matter in 2026

If it isn’t measured, it isn’t managed. Useful north stars:

- 24/7 CFE score by site (%)

- Energy per 1M tokens (inference) and per training FLOP (estimated)

- Water usage effectiveness (WUE) and heat reuse %

- Red-team coverage (% of high-risk capabilities tested)

- Time-to-mitigate for reported safety incidents

- Vulnerability lifecycle: discover-to-patch median days (AI-assisted vs baseline)

- Safety launch-gate pass rate and rollback frequency

- Cost and latency per task, segmented by model tier (small vs frontier)
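Several of these metrics are simple ratios once the raw data is logged. A minimal Python sketch with hypothetical numbers; WUE follows the common definition of site water use (liters) per kWh of IT equipment energy, and comparing AI-assisted against baseline patch times would mean running `median_days_to_patch` on each group separately:

```python
from statistics import median


def wue_l_per_kwh(water_liters: float, it_energy_kwh: float) -> float:
    """Water usage effectiveness: site water use divided by IT equipment energy."""
    return water_liters / it_energy_kwh


def kwh_per_million_tokens(energy_kwh: float, tokens_served: int) -> float:
    """Inference energy intensity, normalized to one million tokens."""
    return energy_kwh * 1_000_000 / tokens_served


def median_days_to_patch(discovered_day: list[int], patched_day: list[int]) -> float:
    """Median discover-to-patch interval, with days given as integer offsets."""
    return median([p - d for d, p in zip(discovered_day, patched_day)])


# Hypothetical monthly numbers for one site:
print(wue_l_per_kwh(water_liters=1_800_000, it_energy_kwh=1_000_000))          # 1.8
print(kwh_per_million_tokens(energy_kwh=50_000, tokens_served=2_000_000_000))  # 25.0
print(median_days_to_patch([0, 3, 10], [7, 5, 40]))                            # 7
```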

The bottom line: AI’s next act is infrastructure and integrity

The story of April 21, 2026, isn’t a flashy demo. It’s the grown-up version of AI: physics, power, governance, and trade-offs. OpenAI’s acquisitions signal that clean energy and ethical deployment aren’t side quests—they’re the main plot. Anthropic’s Mythos shows that capability leaps bring equal and opposite obligations.

Scale is still coming. The winners will be those who can power it cleanly and prove it’s safe—under scrutiny, not slogans.

- Coaio roundup: Breaking Tech News on April 21, 2026

- IEA: Data Centres and Networks

FAQ

Q: Are AI data centers really at risk of running out of power? A: Yes—locally and operationally. While global generation is large, interconnection delays, transmission bottlenecks, and the need for reliable, around-the-clock power create real constraints. Securing 24/7 CFE and grid-aware scheduling reduces both climate impact and outage risk.

Q: What is 24/7 carbon-free energy (CFE), and why does it matter for AI? A: 24/7 CFE means matching your electricity consumption with clean sources hour by hour, not just annually. For always-on AI inference clusters, it’s the gold standard to ensure operations don’t rely on fossil peakers during critical demand windows. See Google’s approach: 24/7 CFE.

Q: Why would OpenAI consider fusion investments now? A: Fusion offers the promise of firm, carbon-free power that scales. It’s a hedge against the limits of variable renewables and long interconnection queues. However, timelines are uncertain; near-term strategies still depend on PPAs, storage, and smarter demand.

Q: Doesn’t consolidation kill innovation in AI? A: It can stifle certain kinds of innovation, but it can also accelerate deployment and standardization. The healthiest ecosystems balance strong incumbents (who can scale infrastructure and safety) with nimble specialists (who pioneer domain breakthroughs). Policy and procurement choices influence that balance.

Q: How can models like Anthropic’s Mythos be released responsibly if they can find software vulnerabilities so well? A: Through staged, gated access; harm-threshold evals; robust monitoring; and integrated vulnerability disclosure pipelines. Responsible deployment means privileging defenders (e.g., vetted security teams) and watching for abuse signals with authority to revoke access fast.

Q: Are AI’s productivity gains in software development overhyped? A: The gains are real for well-scoped tasks—think boilerplate code, refactoring, and test generation. The risk is quality cliffs: subtle bugs, security lapses, or mismatch with domain nuances. Pair speed boosts with hybrid testing (automation plus human exploration) and strict CI/CD gates.

Q: What standards or frameworks should companies adopt for AI safety? A: Start with the NIST AI RMF and OECD AI Principles. Use model cards for transparency and consider policies akin to Anthropic’s Responsible Scaling Policy. Tailor controls by use-case risk.

Q: What’s a single, high-impact step enterprises can take right now? A: Establish an AI launch gate: no deployment without documented risk assessment, red-team results, rollback plans, and an accountable owner. It creates a repeatable safety muscle that scales with your portfolio.

Q: How should investors diligence AI startups in this environment? A: Look beyond demos. Ask for 24/7 CFE strategies, model evals, safety incident SLAs, and a realistic compute plan. Verify customer distribution and data moats; check that speed doesn’t outpace governance.

Clear takeaway: The next epoch of AI won’t be decided by model size alone. It will be won by those who can secure clean power, codify safety, and scale responsibly—turning infrastructure and integrity into enduring competitive advantages.
