Why Delta’s Ed Bastian Says Calling It “Artificial Intelligence” Is a Mistake—and What That Means for Aviation

If you wanted to make people nervous about new technology, what would you call it? According to Delta Air Lines CEO Ed Bastian, the very phrase “artificial intelligence” is the problem. In a recent interview with Fortune, he argued that the word “artificial” primes employees and customers to expect dystopia—not better operations, safer flights, or friendlier service. And in a safety-critical industry like aviation, he believes that matters a lot.

This isn’t just a semantic squabble. It’s a strategic shift in how leaders frame a technology that’s already powering flight routing, predictive maintenance, and customer interactions across the travel industry. Under Bastian, Delta has poured resources into machine learning and generative AI while carefully avoiding overhyped labels. The aim: build trust by positioning AI as intelligent augmentation—not replacement.

Let’s unpack why the label “artificial intelligence” can stall real-world adoption, how Delta’s approach fits a larger business trend, and what any enterprise can learn about rebranding and responsibly integrating AI.

For context, you can read the Fortune interview here: “I think it’s a mistake”: Delta CEO Ed Bastian refuses to call it “artificial intelligence”.

The branding problem no one saw coming

“Artificial intelligence” is a decades-old term, born in labs and lore. It evokes humanoid robots, science fiction narratives, and, more recently, spectacular breakthroughs—and risks. In boardrooms and breakrooms, those connotations can do real damage.

- “Artificial” sounds inauthentic, alien, and unpredictable.

- AI is often portrayed as a job-taker, not a teammate.

- Media narratives emphasize catastrophic failure modes, not everyday wins.

Bastian’s point is simple: words steer emotions, and emotions steer adoption. If the term triggers fear, organizations delay deployment or quietly limit scope. In industries like aviation—where safety is non-negotiable and employees are deeply specialized—skepticism can become a hard stop.

His solution: reframe the technology so teams see it as an assistant that amplifies human expertise. That’s not hand-waving; it’s a change-management tactic that lowers cognitive and cultural barriers.

Why language matters more in aviation

Airlines operate complex systems under extreme constraints: weather, fuel prices, air traffic control, volatile demand, and razor-thin margins. Any tool that improves reliability, safety, or efficiency is valuable. But adoption requires trust at multiple layers:

- Frontline crews must trust recommendations.

- Operations and maintenance teams must trust predictive signals.

- Customers must trust communications during disruptions.

- Regulators must trust governance and controls.

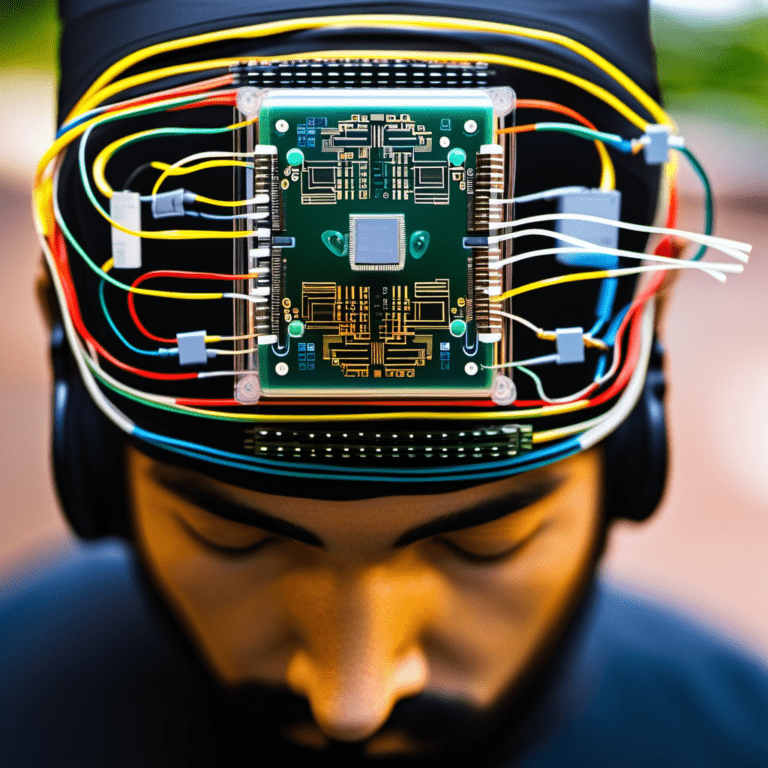

Calling it “artificial intelligence” subtly suggests removing humans from the loop. In a cockpit, on a ramp, or in a network operations center, that’s a non-starter. Framing it as “intelligent assistance,” “augmented intelligence,” or simply “advanced analytics” leaves room for what aviation already does best—structured procedures, human oversight, and continuous improvement.

It’s no accident many tech products emphasize co-pilot metaphors. See Microsoft’s productivity suite under the Copilot banner. The promise is not “we’ll do it for you,” but “we’ll help you do it better.”

Inside Delta’s playbook: augment, don’t replace

From Bastian’s perspective, trust is Delta’s competitive edge—both with customers and employees. According to the Fortune piece, Delta:

- Invests heavily in machine learning for operations optimization and maintenance forecasting.

- Uses generative AI internally for chatbots and data analysis to speed up support and insights.

- Partners with major tech providers (such as Microsoft and Nvidia) while keeping expectations grounded to avoid spooking the workforce.

- Positions AI as job-enhancing, not job-erasing, to keep Delta a “great place to work.”

Reframing AI this way doesn’t just soothe nerves. It aligns incentives.

- Skilled employees lean in when they feel supported, not sidelined.

- Leaders can credibly say “we’re using tools to remove drudgery” rather than “we’re automating you.”

- Customers benefit from faster, clearer answers without fearing a faceless system.

For a look at the safety-first ethos that underpins aviation culture, explore the FAA’s safety management approach: FAA Safety Management System.

Beyond Delta: a widening industry conversation

Bastian’s stance isn’t in isolation. Leaders across AI and enterprise technology increasingly emphasize alignment, safety, and human oversight:

- Anthropic highlights model safety and constitutional AI, a narrative shaped by CEO Dario Amodei and others.

- OpenAI publishes safety updates, usage policies, and risk mitigation strategies.

- Google DeepMind focuses on responsible AI principles.

The signal is clear: positioning AI as a responsible partner is now part of mainstream brand strategy. That’s especially true where mistakes are costly, like aviation, healthcare, and finance.

What “intelligent augmentation” really looks like in aviation

Let’s strip away the jargon and talk about tangible workflows where AI-grade analytics already add value—often quietly:

- Predictive maintenance: Models mine sensor data, logs, and environmental conditions to flag components likely to fail before the next scheduled check. This reduces AOG (aircraft on ground) events and keeps fleets flying safely and efficiently.

- Route and fuel optimization: AI-enhanced planning sifts through weather patterns, jet streams, and traffic constraints to recommend routes that save fuel and time while preserving buffers for safety.

- Irregular operations (IROPs): During disruptions, decision engines can simulate cascading effects of delays and reassign gates, crews, and aircraft to minimize knock-on chaos—while humans retain final say.

- Crew pairing and rostering: Complex constraints (duty time, qualifications, base locations) benefit from optimization models that also respect quality-of-life parameters.

- Customer service and ops support: Generative AI can surface relevant policy, fare rules, and rebooking options to agents quickly, or handle routine chat queries 24/7, handing off complex cases to people.

- Safety analysis: Advanced pattern detection can highlight unusual trends in incident reports or flight operational quality assurance (FOQA) data to support proactive interventions.

For broader industry context, see:

- IATA on digital transformation in aviation: IATA Digital Transformation

- McKinsey on AI’s operational value in travel and logistics: McKinsey AI in operations

The psychology of adoption: fear slows, framing speeds

If you tell employees “AI is coming,” they hear “jobs are going.” If you tell them “we’re rolling out decision support tools that cut grunt work,” they hear “you’ll go home earlier—and with less stress.”

This isn’t spin. It’s how humans process change:

- Loss aversion: People weigh potential losses more heavily than equivalent gains. The label “artificial intelligence” puts what they might lose front and center.

- Control bias: Teams accept new tools faster if they keep agency. “Assistance” implies agency; “automation” implies loss of control.

- Social proof: Success stories from peers reduce anxiety. Quiet pilot programs, limited launches, and internal champions matter more than glossy slogans.

Bastian’s approach recognizes that the first promise of AI should be to your people, not your P&L. Deliver on that, and the P&L often follows.

The safety and governance layer: necessary, not optional

Rebranding only works if it reflects reality. In aviation, that means rigorous guardrails:

- Human-in-the-loop by default for safety-critical decisions.

- Clear provenance of data sources and robust MLOps practices.

- Monitoring for model drift and bias, with thresholds and rollback plans.

- Incident reporting paths and ethical guidelines.

- Training programs so frontline teams understand capabilities and limits.
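To make "monitoring for model drift, with thresholds and rollback plans" concrete, here is a minimal sketch. It swaps in one common drift measure, the Population Stability Index (PSI), purely for illustration; the article does not say which metric Delta or anyone else uses. The function names, the 0.2 threshold, and the action labels are all hypothetical.

```python
import math

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    `expected` and `actual` are lists of bin proportions over the same bins
    (for example, training-time vs. live sensor readings).
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        # Clamp to a small epsilon so empty bins do not produce log(0).
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

def check_drift(expected, actual, threshold=0.2):
    """Return the drift score and a suggested action.

    The threshold is an illustrative assumption, not an industry rule;
    the "rollback" action only flags the case for human review.
    """
    score = psi(expected, actual)
    action = "rollback_and_review" if score > threshold else "keep_serving"
    return {"psi": score, "action": action}

baseline = [0.25, 0.25, 0.25, 0.25]
live = [0.70, 0.10, 0.10, 0.10]
print(check_drift(baseline, baseline)["action"])
print(check_drift(baseline, live)["action"])
```

The point of the design is that the threshold and the fallback are written down before anything goes wrong, so a drifting model triggers a known procedure rather than a debate.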

Even the best narrative can’t compensate for weak controls. For reference points on responsible deployment, see:

- Anthropic Safety

- OpenAI Safety

- Google DeepMind Responsible AI

And for infrastructure partners powering enterprise AI workloads:

- Microsoft Copilot and Azure AI

- NVIDIA AI Enterprise

The regulator’s dilemma—and the messaging mismatch

Bastian’s remarks also underscore a friction point: some policymakers lead with worst-case scenarios to justify sweeping rules. For business leaders, that can feed public skepticism and stall sensible adoption. The solution isn’t to dismiss risk; it’s to translate it:

- Acknowledge credible risks and show your controls.

- Avoid vague reassurances; offer measurable commitments and audits.

- Treat safety narratives as investments in adoption, not delays.

In other words, calm beats cavalier. You can tell a safety story while shipping real products—aviation has done it for decades.

Avoid the hype cycle: the “quiet compounding” strategy

The travel industry has been burned by buzzwords that promised revolutions and delivered edge cases. AI is different not because it’s flashier, but because it compounds:

- A small uplift in on-time performance yields outsized loyalty gains.

- A slight reduction in unscheduled maintenance ripples into cost savings and better asset utilization.

- Faster customer responses turn tense moments into brand-building ones.

The trick is to roll up those 1–3% improvements across many processes. That compounding is only possible if the organization buys in—and that happens when teams feel helped, not hunted.
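A quick back-of-the-envelope calculation shows why small gains matter. The sketch below assumes, hypothetically, that each process improvement is independent and stacks multiplicatively on one shared outcome; real operations are messier, so treat the number as an illustration rather than a forecast.

```python
from math import prod

def compounded_uplift(gains):
    """Combine per-process fractional gains multiplicatively.

    Assumption (not from the article): gains are independent and
    multiply together, so ten 2% gains beat a flat 20%.
    """
    return prod(1.0 + g for g in gains) - 1.0

# Ten hypothetical processes, each improved by 2%.
gains = [0.02] * 10
total = compounded_uplift(gains)
print(f"Combined uplift: {total:.1%}")  # prints "Combined uplift: 21.9%"
```

Under these assumptions, ten modest 2% improvements add up to roughly a 22% overall gain, which is why breadth of adoption matters more than any single flashy win.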

What to call it instead? Names that calm, not alarm

Is renaming purely cosmetic? Not if it triggers the right mental model. Consider these neutral-to-positive labels:

- Intelligent assistance

- Decision support

- Augmented intelligence

- Machine learning-powered tools

- Predictive analytics

- Co-pilot for [function] (e.g., “co-pilot for maintenance planning”)

Notice what each phrase does: it points to a clear benefit, preserves human agency, and avoids science-fiction vibes. It’s not about hiding AI; it’s about clarifying its role.

A practical communications playbook for leaders

If you’re rolling out AI across a large, regulated enterprise, steal a page from Delta’s approach:

- Start with purpose, not tech: “We’re improving safety, reliability, and employee experience” beats “We’re launching AI.”

- Rename the tool to match the job: Pick domain-first labels like “crew pairing assistant” rather than “AI optimizer.”

- Show, don’t tell: Pilot with a respected team. Share before/after metrics and testimonials from peers.

- Keep people in control: Default to human approval for consequential actions. Make override easy.

- Train for literacy, not wizardry: Teach capabilities and limits. Offer cheat sheets. Normalize “I don’t know, let’s check.”

- Publish guardrails: Document data sources, privacy protections, and response plans. Invite feedback.

- Reward adoption stories: Celebrate teams who use the tools to fix pain points. Peer signals travel farther than memos.

- Measure what matters: Track reliability, NPS/CSAT, safety KPIs, and employee engagement, not just cost savings.

The workforce question: replacement vs. elevation

Layoffs in tech and union worries make it easy to assume AI equals fewer jobs. Bastian’s narrative pushes in the opposite direction: AI should eliminate low-value work and elevate human roles.

In aviation, that looks like:

- Agents spending less time hunting policies and more time solving edge cases with empathy.

- Maintenance crews acting on higher-confidence insights, reducing rework and stress.

- Operations leaders getting better foresight during bad weather so they can protect rest windows and service integrity.

- Data analysts moving from ad hoc pulls to higher-order modeling and decision support.

The “great place to work” aim isn’t just HR branding. It’s a pragmatic bet that happy, trusted teams deliver better, more resilient operations. For perspective on workplace culture benchmarks, see Great Place To Work.

The customer angle: when AI earns trust at the gate

Customers don’t care what you call it if the experience improves. But naming still matters when things go wrong. During disruptions, the difference between “a bot said no” and “our system found three options and I can approve one right now” is enormous.

AI that’s framed—and designed—as a teammate to frontline staff can:

- Speed rebooking without trapping customers in loops.

- Offer personalized alternatives that reflect status, preferences, and constraints.

- Keep communication timely and consistent across channels.

Done right, the tech fades into the background. What remains is a sense that the airline is competent, transparent, and on your side.

Foundation models behind the scenes: tool, not talisman

The Fortune interview notes that as foundation models become infrastructure in logistics-heavy fields, the issue isn’t if they’ll be used—it’s how. The most successful deployments avoid magical thinking:

- Use large models for language-heavy tasks (agent guidance, documentation, schedule explanations).

- Use specialized models for forecasting, optimization, and anomaly detection.

- Chain systems with clear handoffs and confidence thresholds.

- Log, monitor, and continually retrain for your data and edge cases.
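"Clear handoffs and confidence thresholds" can be sketched in a few lines. Everything here is hypothetical: the `Suggestion` type, the `route` function, the 0.85 threshold, and the routing labels are illustrative names, not any airline's actual system. Note that both branches end with a person, in keeping with the human-in-the-loop default described earlier.

```python
from dataclasses import dataclass

@dataclass
class Suggestion:
    action: str        # e.g. a proposed rebooking option
    confidence: float  # model's self-reported score in [0, 1]

def route(suggestion, threshold=0.85):
    """Decide where a model suggestion goes next.

    High-confidence suggestions are shown to the frontline agent for
    approval; low-confidence ones are escalated to a specialist.
    The threshold is an illustrative assumption.
    """
    if not 0.0 <= suggestion.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if suggestion.confidence >= threshold:
        return "present_to_agent_for_approval"
    return "escalate_to_specialist"

print(route(Suggestion("rebook on next departure", 0.93)))
print(route(Suggestion("reroute via alternate hub", 0.41)))
```

The model never acts on its own in this sketch; the threshold only changes which human sees the suggestion and how much scrutiny it gets.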

This is the opposite of “let’s sprinkle AI.” It’s the discipline of systems engineering applied to modern ML.

KPIs that prove progress without the hype

If you’re rolling out “intelligent assistance,” pick a small set of metrics, baselined and audited:

- Safety: leading indicators from incident trends; maintenance-induced delays.

- Reliability: completion factor; on-time performance vs. weather/ATC-adjusted baselines.

- Efficiency: fuel burn variance vs. plan; forecast accuracy for demand/crew pairing.

- Customer: CSAT/NPS during IROPs; first-contact resolution.

- Workforce: adoption rates; time-to-competence; engagement scores; attrition in key roles.

Report gains modestly and consistently. Compound them quarter over quarter. Let the results, not the rhetoric, do the selling.

So, is “artificial intelligence” really the wrong name?

If the goal is adoption in high-trust, high-stakes environments, yes. “Artificial” sets the wrong expectation. It suggests the system replaces human judgment rather than helping it. That’s why executives like Ed Bastian advocate swapping out the label for something that signals partnership.

Is that mere marketing? Only if the underlying practices don’t change. When the substance is there—human oversight, measurable gains, responsible rollout—the new label helps teams see what the tech actually is: a tool to make them better at their jobs.

The bigger story: culture eats algorithms for breakfast

Bastian’s comments tap into a truth many companies are relearning. AI wins are less about model novelty and more about organizational trust. The leaders who will extract durable value from AI aren’t just the ones with the biggest clusters. They’re the ones who can shape narratives people believe, back them up with governance, and deliver small, compounding improvements.

That’s how you turn a buzzword into a backbone.

For the full Fortune interview, read: Delta CEO Ed Bastian says calling it “artificial intelligence” is a branding mistake.

FAQs

Q: Why does Ed Bastian think “artificial intelligence” is a bad label? A: He argues the word “artificial” triggers fear in employees and customers, especially in safety-critical fields like aviation. It suggests replacement and loss of control. Reframing AI as assistance or augmentation makes adoption easier.

Q: Isn’t rebranding AI just spin? A: Not if the operations reflect it. Renaming the tool to match its function (“decision support,” “co-pilot,” “predictive analytics”) clarifies roles and preserves human oversight. The label helps people form the right mental model.

Q: What AI use cases make sense for airlines right now? A: Predictive maintenance, route and fuel optimization, IROPs planning, crew scheduling, agent assist for customer service, and safety trend analysis. These are all augmentation-first workflows that keep humans in control.

Q: Will AI take aviation jobs? A: Bastian’s stance is that AI should remove low-value tasks and elevate skilled roles. The near-term opportunity is productivity and quality-of-work improvements, not wholesale replacement—especially where human judgment and certification are central.

Q: How can airlines deploy AI responsibly? A: Use human-in-the-loop controls, robust MLOps, clear data governance, bias and drift monitoring, transparent policies, and frontline training. Publish guardrails and measure outcomes beyond cost savings—safety, reliability, and employee engagement count.

Q: What should we call AI internally to reduce resistance? A: Favor domain-first names like “maintenance planning assistant,” “crew pairing co-pilot,” or “decision support.” Avoid catch-all “AI” labels in frontline workflows unless you’re describing the capability in plain terms.

Q: How does this align with broader AI industry thinking? A: Many leaders emphasize safety and responsibility. See resources from Anthropic, OpenAI, and Google DeepMind. The trend is to present AI as a controlled, human-centered system.

Q: What’s the next frontier for AI in aviation? A: Deeper integration of predictive and optimization models into day-of-operations decisioning, richer agent-assist for complex irregular operations, and continuous-learning systems with strong governance. The emphasis will remain on augmentation and reliability.

The takeaway

Words shape adoption. In aviation and other high-stakes industries, calling it “artificial intelligence” can backfire—fueling fear instead of trust. Delta CEO Ed Bastian’s message is pragmatic: rename the tool, reframe the goal, and reinforce the culture that keeps humans in charge. Do that, and AI becomes what it should have been all along—a co-pilot for better decisions, safer operations, and smoother journeys.
